📌 Key Takeaways

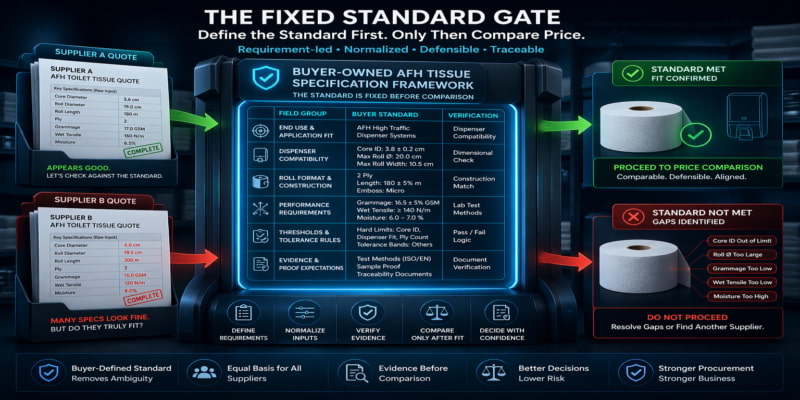

Clear supplier decisions start with buyer-defined requirements, not generic labels that let suppliers guess what “fit” means.

- Define Fit First: Set the standard before quotes arrive, or price becomes the only easy comparison.

- Use Six Field Groups: Define end use, dispenser fit, roll format, performance, thresholds, and proof requirements.

- Normalize Before Comparing: Convert every supplier response into one common review grid and flag missing data clearly.

- Be Precise Where It Matters: Lock hard limits for critical fields and use tolerance bands where flexibility is safe.

- Keep Procurement and QA Aligned: One shared specification method lets commercial review and technical proof work from the same standard.

- Verify After Defining Requirements: Samples, audits, and test reports only matter after the buying team defines what counts as fit.

Clear requirements turn look-alike quotes into comparable offers and make supplier decisions easier to defend.

This guide is for procurement leads and QA teams evaluating away-from-home (AFH) toilet tissue suppliers. It sets out a cleaner decision framework, explained in detail in the sections that follow.

~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~

When Two Quotes Tell You Nothing

Two supplier offers are open on the screen. Both say “2-ply recycled or virgin fiber tissue, 500-sheet count, 45mm core diameter.” The price difference is real. One supplier is familiar. The other came in through a recent market review.

Which one is actually fit for this contract?

Across the table, QA is going to ask about wet strength performance in the healthcare wing. Operations will ask whether the rolls will clear the dispenser housings in the school block. Finance will ask why one option costs 12% more. And the procurement lead — the person who called this meeting — does not yet have a clean answer to any of it.

This is not a supplier problem. It’s a specification problem. The two offers look similar because the inquiry that generated them asked for similarity, not fit. The requirement set was incomplete before the first quote request went out, so every answer that came back fills the same gap in its own way.

There’s a better way to run this. It starts before the first inquiry is written — with a buyer-owned specification framework that defines what fit means, in writing, before supplier comparison begins.

Think of it as a blueprint. A builder doesn’t ask three contractors what they think the house should look like and then compare their visions. The blueprint exists first. Every bid is evaluated against it. The comparison is only meaningful because the standard is fixed. The same principle applies here: evaluation must be anchored to a fixed specification rather than reacting to supplier variability.

This guide builds that blueprint for AFH toilet tissue procurement.

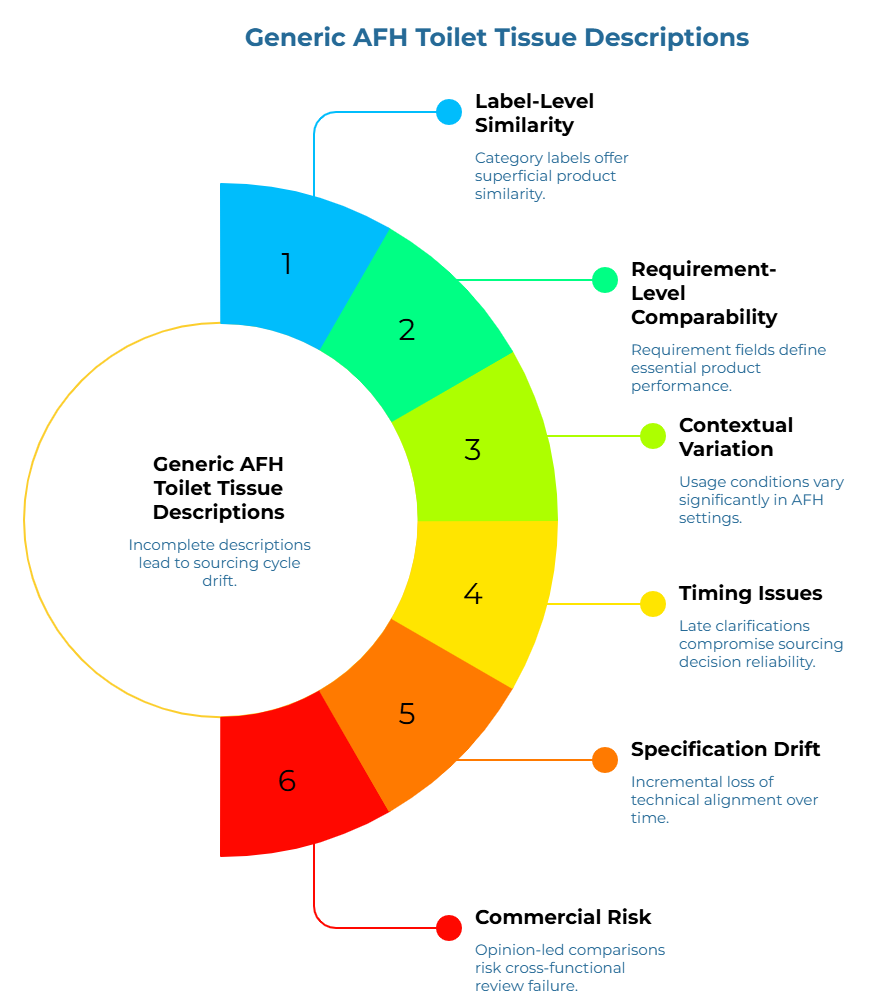

Why Generic AFH Toilet Tissue Descriptions Fail in Real Supplier Comparisons

A category label is not a requirement field. That distinction is the root of the problem — and where most AFH toilet tissue sourcing cycles start to drift.

When a sourcing team sends an inquiry using a category label (“2-ply, 500 sheets, standard”), each supplier fills in the unstated gaps according to their own product range, their own interpretation of “standard,” and sometimes their best guess at what the previous order looked like. Roll diameter, sheet dimensions, core inside diameter, ply bond construction, grammage per ply, wet tensile performance — none of these are locked down. None of them are defined by the buyer. They’re defined, implicitly, by whoever is quoting.

By the time the offers arrive, they look comparable on the surface. They are not.

This is the core distinction that matters: the difference between label-level similarity and requirement-level comparability. A category label describes what a product is called. A requirement field defines what it must do, to what standard, within what measurable range, in what specific end-use context. Without requirement fields, a procurement team isn’t comparing suppliers — it’s comparing each supplier’s interpretation of an incomplete brief.

The problem is especially acute in AFH settings because usage conditions vary so much between deployment environments. A hospital running 24-hour dispenser traffic has very different performance demands from a boutique hotel bathroom where sheet presentation is a guest-experience signal. A school restroom block in a high-volume education facility operates differently from a public transport interchange. What counts as “fit” shifts with the context, and no generic label captures that variation.

There’s a timing problem too. When the gaps in the requirement set only become visible after quotes have been scored, shortlisted, or provisionally approved, the clarification questions that follow don’t arrive in a neutral moment. One side is now protecting a price. The other is trying to close a specification gap that shouldn’t have existed. The answers become less reliable, the comparison becomes less defensible, and the internal team is left trying to explain a sourcing decision built on a foundation that was never solidly defined.

This is what late clarification does. It creates specification drift — the incremental loss of technical alignment between the buyer’s operational needs and the supplier’s proposed performance data, where different stakeholders have quietly filled the same gaps differently, and no one realizes it until something goes wrong after rollout. The longer vague inputs stay in the process, the harder it becomes to tell whether the team is comparing products, interpretations, or simply presentation quality.

The commercial risk isn’t just a weak technical fit. Opinion-led comparisons — sourcing decisions built on incomplete requirement language — rarely survive cross-functional review cleanly. If the team cannot explain why one offer is operationally better than another, the selection logic remains vulnerable from the moment it’s documented.

Generic labels don’t just create ambiguity. They create the appearance of resolution. A complete-looking quote set built on an incomplete requirement set feels more settled than it is — right up until it isn’t.

What a Buyer-Owned AFH Toilet Tissue Specification Method Actually Is

A buyer-owned AFH toilet tissue specification method is a structured requirement document that the buying organization defines — independently and before suppliers are engaged — and that becomes the fixed standard against which every incoming offer is evaluated.

That definition has three parts worth unpacking.

“Buyer-owned” means the requirement definition is the buying team’s work. Not something extracted from supplier marketing materials, inherited from a distributor catalog, or copied from a previous cycle’s intake form. Suppliers provide product data, samples, and certificates. The buying organization provides the standard those inputs are measured against. That distinction is the difference between a criteria-led evaluation and an opinion-led one.

As a problem-solving method, this approach creates the structure needed before price enters the conversation. When teams skip it, price becomes the only available basis for comparison — which is how procurement decisions become hard to defend, both internally and to the operations teams that inherit them.

As a process control layer, it sits at the front end of the supplier evaluation cycle: defined before the first inquiry goes out, used to structure every quote review that follows, and handed to QA as the evidence standard for verification. Procurement evaluates commercial fit against it. QA evaluates proof quality against it. Both functions work from the same document.

The method doesn’t replace commercial judgment. Lead times, supplier track record, supply chain redundancy, and price all matter. What it does is ensure that commercial judgment gets applied to suppliers who have already cleared a buyer-defined fitness standard — not suppliers who wrote persuasive data sheets.

That’s the difference between a quote-ready specification method and a loose product request. One produces criteria-led comparison. The other produces avoidable debate. The standard is set before the sourcing cycle opens. Suppliers respond to specific technical parameters, ensuring that proposals remain within the buyer’s operational guardrails.

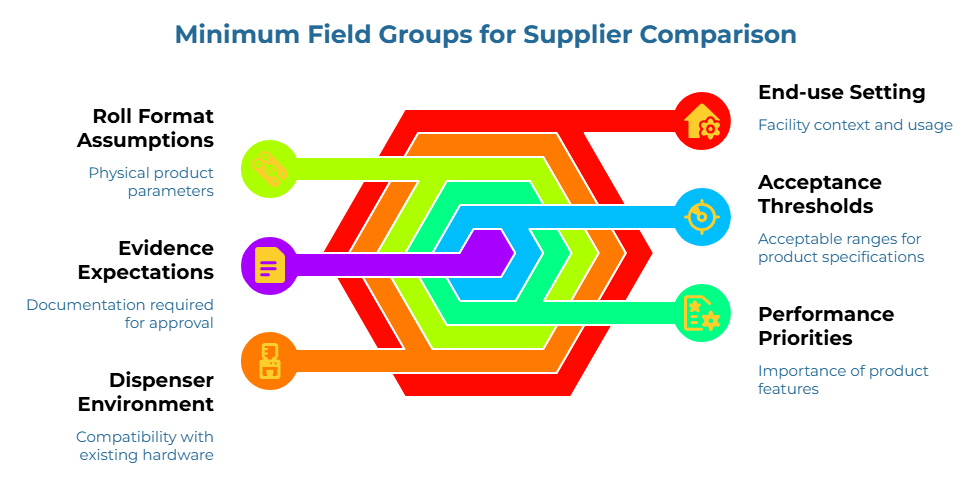

The Minimum Field Groups Procurement and QA Must Define Before Comparing Suppliers

A workable AFH toilet tissue specification method doesn’t require exhaustive technical depth from day one. It requires the right categories of requirements to be named and agreed on before the first quote request is written. Six field groups cover the practical minimum.

End-use setting. What type of facility is this product going into — and what does daily usage actually look like there? The answer is a first-order input, not background color. Healthcare environments with high-frequency dispenser use and clinical hygiene expectations place different demands on sheet integrity than a corporate office washroom with moderate traffic. Public institutions, hospitality properties, education campuses, jan-san distribution chains serving multiple site types — each context shapes which performance fields become critical and which become secondary. The exact weighting varies by site and operating model, but the governing logic is stable: defining the end-use setting first prevents the framework from being built around assumptions that don’t match the facilities being served.

Dispenser environment. What dispenser hardware is already deployed, and what compatibility parameters does it impose? Roll outside diameter, core inside diameter, sheet width, and sheet length must be stated in millimeters (mm) against the actual dispenser tolerance ranges. This field group is often the fastest route to eliminating structurally incompatible offers, and it cuts much of the false equivalence between offers that look similar on paper. A roll that physically cannot fit the installed dispenser hardware isn’t a viable offer at any price point. Stating these parameters before soliciting quotes removes a category of ambiguity that routinely survives into shortlisting.
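A minimal Python sketch of this field group used as a hard gate, assuming the buying team has already read the limits off the installed dispenser's specification. It also assumes the dispenser sets a maximum roll outside diameter and sheet width plus a nominal core size with a ± tolerance. The class and function names and all dimensions are hypothetical placeholders, not real dispenser data. The point is that a dimensional mismatch is reported as a reason for rejection, not traded off against price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DispenserLimits:
    """Hypothetical limits read from the installed dispenser's specification (mm)."""
    max_roll_od_mm: float
    core_id_mm: float
    core_id_tol_mm: float
    max_sheet_width_mm: float


@dataclass(frozen=True)
class RollOffer:
    """Roll dimensions as stated by a supplier (mm)."""
    roll_od_mm: float
    core_id_mm: float
    sheet_width_mm: float


def dispenser_fit_failures(offer: RollOffer, limits: DispenserLimits) -> list[str]:
    """Return reasons the roll cannot be used; an empty list means it clears the gate."""
    failures = []
    if offer.roll_od_mm > limits.max_roll_od_mm:
        failures.append("roll outside diameter exceeds housing")
    if abs(offer.core_id_mm - limits.core_id_mm) > limits.core_id_tol_mm:
        failures.append("core inside diameter outside tolerance")
    if offer.sheet_width_mm > limits.max_sheet_width_mm:
        failures.append("sheet width exceeds sheet channel")
    return failures


# Placeholder limits and offers for illustration only.
limits = DispenserLimits(max_roll_od_mm=130, core_id_mm=45, core_id_tol_mm=1, max_sheet_width_mm=100)
print(dispenser_fit_failures(RollOffer(125, 45.5, 97), limits))  # []
print(dispenser_fit_failures(RollOffer(135, 42, 97), limits))
# ['roll outside diameter exceeds housing', 'core inside diameter outside tolerance']
```

Returning a list of reasons, rather than a bare yes/no, gives the review team wording it can put straight into the comparison record.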

Roll format assumptions. This covers the parameters that define the physical product: sheet count per roll, number of plies, ply construction method (embossed, laminated, or bonded), roll outside diameter, and format type (standard, jumbo, or mini-jumbo). These must be defined by the buyer, not left for suppliers to self-specify. When roll format assumptions are open, offers that look structurally comparable may differ by meaningful margins on parameters that affect dispenser performance, replenishment scheduling, and storage logistics.

Performance priorities. What does the product need to do under real AFH usage conditions — and in what order of importance? Dry tensile strength, wet tensile strength (particularly relevant in healthcare or high-humidity environments), and resistance to premature tearing under dispenser load are the practical performance fields for most AFH applications. These don’t need to be stated as precise test figures at the inquiry stage. But they do need to be acknowledged as ranked priorities, so suppliers understand which properties the evaluation will weigh most heavily. If performance priorities are left unnamed, suppliers will decide the hierarchy themselves. The current active version of ISO 12625-5 provides a standardized basis for wet tensile strength determination — a useful reference for structuring consistent supplier data requests in performance-critical environments.
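If the team wants to carry a ranked list of performance priorities into a later scoring step, one common convention is rank-sum weighting. The sketch below illustrates that assumption and is not part of the method described here. The field names and the 0-to-1 field scores are hypothetical, and a score like this would only be applied to offers that have already cleared any hard requirements.

```python
from fractions import Fraction


def rank_sum_weights(ranked_fields: list[str]) -> dict[str, Fraction]:
    """Turn a ranked priority list (most important first) into rank-sum weights.

    With n fields, the field at rank r gets (n - r + 1) / (1 + 2 + ... + n),
    so the weights always sum to exactly 1.
    """
    n = len(ranked_fields)
    total = n * (n + 1) // 2
    return {field: Fraction(n - i, total) for i, field in enumerate(ranked_fields)}


def weighted_score(weights: dict[str, Fraction], field_scores: dict[str, Fraction]) -> Fraction:
    """Combine per-field scores (0 = worst, 1 = best, assigned by the review team)."""
    return sum((weights[f] * field_scores[f] for f in weights), Fraction(0))


# Hypothetical ranking for a healthcare site: wet strength first.
weights = rank_sum_weights(["wet tensile", "dry tensile", "tear resistance"])
print(weights["wet tensile"], weights["dry tensile"], weights["tear resistance"])  # 1/2 1/3 1/6
```

Exact fractions keep the weights summing to 1 with no rounding noise. The convention itself is a choice, so the team should state whichever weighting it uses in the inquiry.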

Acceptance thresholds. Data without a defined acceptance standard is just data. What is the acceptable grammage range per ply, expressed in grams per square meter (gsm)? What roll diameter or sheet count variance is tolerable? Where are the hard limits, and where are the acceptable ranges? Thresholds don’t have to be finalized before the first quote cycle, but the team needs internal agreement that thresholds exist and will be applied consistently. A clear distinction between fields that act as hard gates, fields that allow a reasonable band, and fields that matter for ranking but not approval is often enough to prevent avoidable internal drift. ISO 536 defines the standard method for grammage determination, giving buyer and supplier a shared measurement basis. Moisture content, typically determined by oven-drying under ISO 287 or an equivalent regional method, is relevant to roll stability in transit and storage and to softness consistency in use.
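The three-way distinction between hard gates, bands, and ranking-only fields can be recorded as data, so every reviewer applies it the same way. This is a minimal sketch: the field names are hypothetical, and the limits are placeholders, not recommended values.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Role(Enum):
    HARD_GATE = "hard gate"      # outside limits: the offer cannot be approved
    BAND = "tolerance band"      # outside the band: flagged for review, not rejected
    RANKING = "ranking only"     # informs ranking, never approval


@dataclass(frozen=True)
class Threshold:
    field: str
    role: Role
    low: Optional[float] = None   # None means no lower limit
    high: Optional[float] = None  # None means no upper limit

    def outcome(self, value: float) -> str:
        """Return 'pass', 'reject', 'flag', or 'rank' for one reported value."""
        if self.role is Role.RANKING:
            return "rank"
        inside = (self.low is None or value >= self.low) and (
            self.high is None or value <= self.high
        )
        if inside:
            return "pass"
        return "reject" if self.role is Role.HARD_GATE else "flag"


# Placeholder thresholds for illustration only.
moisture = Threshold("moisture content (%)", Role.HARD_GATE, high=8.0)
grammage = Threshold("grammage per ply (gsm)", Role.BAND, low=16.0, high=19.0)
print(moisture.outcome(9.0), grammage.outcome(19.5))  # reject flag
```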

Evidence expectations. What documentation will be required before a supplier is approved? Test reports from accredited third-party laboratories, product samples traceable to production batches, and relevant sustainability or quality management certificates are standard expectations for a credible AFH supplier evaluation. Stating these expectations before the sourcing cycle opens prevents the situation where one supplier provides comprehensive third-party test data and another provides only a product data sheet — and the buyer has no pre-established basis for treating the gap as disqualifying. Where measurable properties matter, standards-based terminology reduces ambiguity. ISO 12625-1 provides the vocabulary framework for tissue paper and tissue products, which supports consistent, unambiguous communication of evidence requirements across supplier correspondence.

These six field groups are the minimum. A buying team that has worked through all of them owns a genuine comparison standard. A team that hasn’t is evaluating product descriptions, not fitness for use.

How to Normalize Mismatched Supplier Inputs into One Common-Basis Requirement Set

A supplier input can be complete without being comparable. That principle — completeness is not comparability — is the reason normalization exists as a distinct step between defining a specification method and running a live quote review.

Even with a well-defined method in place, incoming supplier quotes will arrive in different formats. One supplier uses metric units throughout. Another quotes in imperial equivalents. One provides test data organized by product code. Another provides a single multi-product data sheet that covers 23 SKUs and requires extraction. Some include moisture content values; others don’t acknowledge the field at all.

This is not a supplier quality problem. It’s the structural reality of sourcing across a global market where data formatting isn’t standardized. The buyer’s job — the middle step that most teams skip — is normalization.
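Unit conversion is the most mechanical part of normalization. The sketch below assumes lengths quoted in inches and grammage quoted as an imperial basis weight (pounds per ream). The inch, pound, and square-foot factors are exact by definition. Ream area is not universal: tissue is often quoted against a 3,000 ft² ream, but this should be confirmed with each supplier, so it is left as an explicit parameter. The per-ply helper assumes every ply has the same grammage, which is a simplification.

```python
MM_PER_INCH = 25.4          # exact by definition
G_PER_LB = 453.59237        # exact by definition
M2_PER_FT2 = 0.09290304     # exact: 0.3048 m squared


def inches_to_mm(inches: float) -> float:
    return inches * MM_PER_INCH


def basis_weight_to_gsm(lb_per_ream: float, ream_ft2: float) -> float:
    """Convert basis weight (lb per ream) to grammage (g/m^2).

    ream_ft2 must come from the supplier; ream sizes differ between paper grades.
    """
    return lb_per_ream * G_PER_LB / (ream_ft2 * M2_PER_FT2)


def per_ply_gsm(total_gsm: float, plies: int) -> float:
    """Simplification: assumes every ply has the same grammage."""
    return total_gsm / plies


print(round(inches_to_mm(4.5), 1))              # 114.3
print(round(basis_weight_to_gsm(10, 3000), 2))  # 16.27
print(per_ply_gsm(36.0, 2))                     # 18.0
```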

A 14-page supplier data pack is not inherently more useful than a 4-page one if neither maps to the six field groups the buying team defined. Normalization means extracting what’s relevant, flagging what’s missing, and creating a common review grid that applies equally to every offer on the table.

In practice, normalization follows four moves.

- Translate supplier language into buyer language. Every supplier will describe its offer through its own structure, emphasis, and terminology. The first step is bringing those claims back into the buyer’s field groups rather than letting each supplier define the review frame. For every requirement field, the reviewer enters either the supplier’s stated value (converted to the buyer’s units where necessary), a note that the data was provided in a format requiring clarification, or a clear gap marker.

- Separate matched fields from partially matched fields. “Close” is not the same as “aligned.” A field that only partly maps to the requirement should stay visible as a gap, not disappear into a broad pass judgment. Two offers both stating “2-ply, 500 sheets” may differ by 15% in grammage per ply, have non-interchangeable roll diameters, and use different ply bonding methods — all of which have operational consequences in AFH deployment. Without field-by-field review, those differences stay invisible until complaints surface after rollout.

- Treat missing fields as unresolved, not irrelevant. This is where many comparisons go wrong. Missing information often gets silently downgraded instead of flagged. That makes one supplier look cleaner only because its unknowns are not being named. Gap markers aren’t immediate disqualifications — they’re action items. The supplier may be able to provide the missing data. But it’s the buyer’s standard that determines what data is required, not the supplier’s willingness to provide it.

- Run the first real comparison only after common-basis mapping is done. At that point, the discussion becomes more disciplined. The team is no longer comparing supplier storytelling. It is comparing supplier responses against a shared requirement structure.
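The four moves above can be sketched as a single mapping function. This illustration rests on three assumptions. The buyer grid holds a numeric range per field in the buyer's own units, with conversion already done. The field names and ranges are hypothetical. Supplier fields that fall outside the grid are ignored, because the buyer's structure governs the review.

```python
from typing import Optional

# Hypothetical buyer grid: requirement field -> acceptable (low, high) in buyer units.
BUYER_GRID: dict[str, tuple[float, float]] = {
    "grammage_per_ply_gsm": (16.0, 19.0),
    "core_id_mm": (44.0, 46.0),
    "sheets_per_roll": (480, 520),
}


def normalize(grid: dict[str, tuple[float, float]],
              response: dict[str, Optional[float]]) -> dict[str, str]:
    """Map one supplier response onto the buyer grid, one status per buyer field."""
    row = {}
    for field, (low, high) in grid.items():   # the buyer's fields set the frame
        value = response.get(field)           # absent key or None: nothing usable stated
        if value is None:
            row[field] = "GAP"                # unresolved action item, not a silent pass
        elif low <= value <= high:
            row[field] = "MATCHED"
        else:
            row[field] = "NOT ALIGNED"        # stays visible even when "close"
    return row


# Two illustrative responses; the extra supplier field is ignored.
offer_a = {"grammage_per_ply_gsm": 17.5, "core_id_mm": 45.0, "sheets_per_roll": 500, "roll_length_m": 30.0}
offer_b = {"grammage_per_ply_gsm": 15.0, "core_id_mm": 45.0}
print(normalize(BUYER_GRID, offer_b))
# {'grammage_per_ply_gsm': 'NOT ALIGNED', 'core_id_mm': 'MATCHED', 'sheets_per_roll': 'GAP'}
```

Every offer produces a row with the same keys, so the rows can be laid side by side as the common review grid.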

This is what buyer-owned definitions actually do in practice. When the method defines “sheet grammage” as a specific range in gsm, measured per ISO 536, both buyer and supplier know exactly what data is expected and in what form. When dispenser compatibility is stated with named hardware parameters in millimeters, a supplier can’t submit a specification in a format that sidesteps the question. The buyer’s language governs the review.

The output of normalization is a single document where every supplier offer has been evaluated against the same buyer-defined standard. That document is what defensible supplier comparison actually looks like — and it only exists because the buyer defined the standard first.

This process does not have to slow procurement. In many cases, it does the opposite. A lean normalization step reduces later rework because it prevents Procurement, QA, and Operations from rediscovering the same missing fields in different meetings. The real time loss in most sourcing cycles comes from unclear requirements, not from controlled structure.

Where Requirement Precision Protects Quality — and Where It Just Creates Friction

Not every field in the method deserves the same level of precision. Getting this wrong in either direction creates problems. Under-specification leaves gaps that suppliers fill differently. Over-specification shrinks the qualified supplier pool unnecessarily and generates procurement friction that slows the cycle without adding proportional quality assurance value.

The practical discipline is distinguishing between critical fields and tolerance-band fields — and being able to explain that distinction clearly to non-technical stakeholders.

Critical fields are those where deviation directly affects end-use fitness. Core diameter is critical when the dispenser hardware is fixed — a roll that doesn’t fit isn’t a viable option at any specification level. Wet tensile strength thresholds are critical in healthcare or high-moisture environments where sheet integrity under wet conditions is a documented operational requirement. Sheet count per roll may be critical when dispenser capacity and replenishment scheduling are tightly managed across a large estate. These fields are binary: the requirement is either met or it isn’t, and the consequences of missing it are immediate and visible.

Tolerance-band fields are those where a defined acceptable range, rather than a precise target value, better reflects operational reality. Grammage stated as a range — a minimum base weight with an acceptable upper tolerance — is more practical for global sourcing than a single target number that few suppliers’ standard products will match exactly. Roll diameter expressed as a target with a defined variance allows suppliers to offer products that work within the dispenser’s physical tolerance without requiring a precision match to an internally generated figure. These fields don’t need hard pass/fail thresholds. They need bounds.

Teams should also resist pseudo-precision. If the organization has not defined a validated numeric band for a particular field, it is better to acknowledge that the field requires tighter control than to invent an exact tolerance. Practical procurement discipline is not about sounding technical. It is about making the review more reliable.

The rule for setting precision levels is straightforward: specify tightly where end-use failure is costly, visible, or hard to remediate after rollout. Specify with tolerance ranges where operational reality supports flexibility. Over-prescribing low-impact fields doesn’t improve quality — it signals that the method was built from a technical wish list rather than from actual facility requirements.
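The precision rule can be written as a small decision function, which also makes the point about pseudo-precision explicit. This is a sketch with hypothetical attribute names. The three failure criteria are combined with "or", exactly as the rule states them. A field with no validated numeric range is labeled as such instead of being given an invented tolerance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldRisk:
    name: str
    failure_costly: bool      # would an end-use failure be expensive?
    failure_visible: bool     # would it be obvious to users or operations?
    hard_to_remediate: bool   # would it be hard to fix after rollout?
    validated_band: bool      # has the team actually validated a numeric range?


def precision_level(f: FieldRisk) -> str:
    """Tight limits where failure is costly, visible, or hard to fix; bands elsewhere."""
    if f.failure_costly or f.failure_visible or f.hard_to_remediate:
        level = "tight limit"
    else:
        level = "tolerance band"
    if not f.validated_band:
        # Name the missing range instead of inventing an exact tolerance.
        return level + " (numeric range not yet validated)"
    return level


print(precision_level(FieldRisk("core inside diameter", True, True, True, True)))  # tight limit
```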

When explaining precision decisions to colleagues in finance, operations, or senior management, the framing that works is this: some fields are constraints (the dispenser either accepts the roll or it doesn’t), and some fields are preferences with acceptable bands (softness, grammage within a defined range). The method captures both. The distinction helps non-technical reviewers understand why one supplier’s minor deviation on a tolerance-band field doesn’t disqualify the offer, while a deviation on a critical field does.

Precision should rise where the cost of ambiguity is real. Everywhere else, clarity is enough.

How Procurement and QA Can Use the Same Method Without Needing the Same Priorities

One of the most consistent friction points in AFH tissue sourcing is the handoff between Procurement and QA. The functions enter the evaluation at different moments, with different primary concerns, and often working from different documents. The result is a review process where the same supplier offer gets assessed twice, against different implicit standards, producing conclusions that are hard to reconcile.

A shared specification method resolves this — not by forcing both functions to weigh the same fields identically, but by giving them a common review structure to work from.

What’s the difference? Procurement’s primary interest in the method is commercial defensibility. The framework enables a procurement manager to answer — clearly and on record — why one supplier was selected over the others. The answer lives in the normalized review grid. Field by field, the selected supplier met the buyer-defined requirements. The rationale is documented. It holds under scrutiny. Procurement doesn’t need to debate QA’s technical criteria; it needs the selection logic to be traceable.

QA’s primary interest is evidence integrity. Did the supplier provide test data that corresponds to the defined field requirements? Are test reports traceable to a recognized standard and issued by an appropriate testing source? Do product samples match the specification stated in the supplier’s data sheet? QA doesn’t need to reproduce Procurement’s cost analysis. It needs the requirement fields to be defined clearly enough that evidence can be evaluated against them without interpretation — a judgment call replaced by a documented standard.
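QA's three questions can be expressed as checks against the method rather than as judgment calls. This is a minimal sketch with two assumptions: the method records which test standard applies to each field, and accreditation has been confirmed by the buyer rather than simply claimed. The record layout and batch identifiers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LabReport:
    field: str            # requirement field the report claims to cover
    standard: str         # test method cited on the report, e.g. "ISO 12625-5"
    lab_accredited: bool  # accreditation confirmed by the buyer, not just claimed
    batch_id: str         # production batch the tested sample came from


def evidence_issues(report: LabReport, required_standard: dict[str, str],
                    proposed_batch: str) -> list[str]:
    """List evidence-integrity problems; an empty list means the report can be assessed."""
    issues = []
    expected = required_standard.get(report.field)
    if expected is None:
        issues.append(f"{report.field}: not a field defined in the method")
    elif report.standard != expected:
        issues.append(f"tested to {report.standard}, method requires {expected}")
    if not report.lab_accredited:
        issues.append("laboratory accreditation not confirmed")
    if report.batch_id != proposed_batch:
        issues.append("sample does not trace to the proposed production batch")
    return issues


required = {"wet tensile strength": "ISO 12625-5", "grammage per ply": "ISO 536"}
print(evidence_issues(LabReport("wet tensile strength", "ISO 12625-5", True, "B-001"), required, "B-001"))  # []
```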

The internal champion — typically the procurement lead or category owner — doesn’t adjudicate between these two sets of priorities. Their job is to ensure both functions are working from the same method document. A shared framework lowers the social risk that serious internal champions carry. Instead of pushing a preference, that person can circulate a framework. Instead of asking everyone to agree on instinct, they can ask everyone to react to named fields. The conversation shifts from “Which supplier seems better?” to “Which offer satisfies the criteria, where are the unresolved gaps, and what still needs clarification?”

When a sourcing decision comes under scrutiny from operations, finance, or senior leadership, the champion can present a requirement-grounded evaluation rather than a judgment call. The method is the evidence. The normalized comparison is the output. The decision follows from both — and can be explained clearly to anyone in the room who wasn’t part of the original evaluation.

Procurement wants comparability. QA wants fit assurance. Both are available inside one well-built method. The prerequisite is that the method gets built before supplier comparison begins, not assembled during it.

When the Method Should Hand Off into Supplier Verification

The specification method and the supplier verification process are sequential. They are not interchangeable, and they don’t run in parallel.

The method defines what fit means. Verification establishes whether a specific supplier can demonstrate that fit — with documented, traceable evidence. The handoff point between them is well-defined: once the method has been used to normalize incoming offers and identify the supplier or suppliers that meet the defined requirements, verification begins.

Verification is only meaningful after the method is in place. Without defined requirements, there’s nothing to verify against — especially when evaluating toilet tissue paper factories or toilet tissue raw material suppliers. A product sample is useful only if the buying team knows which property to assess and what range is acceptable. A test report is useful only if the team knows which standard was applied and whether the reported value falls within the defined threshold. A supplier audit is useful only if the audit protocol reflects the buying team’s specific requirement fields.

This is the sequential logic that governs defensible AFH supplier evaluation: the method comes first, verification follows, and the two stages share the same requirement language. Buyers who attempt to run verification before the method is complete are essentially auditing against an unfinished standard — which produces confidence in the wrong thing.
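The sequencing rule can be expressed as a gate. This sketch uses a deliberately strict assumption: verification starts only when every method field has a buyer-defined value and the offer is matched on all of them in the normalized grid. A team could relax the second condition for tolerance-band fields. The field names and ranges are hypothetical.

```python
from typing import Optional


def verification_blockers(
    method: dict[str, Optional[tuple[float, float]]],
    offer_row: dict[str, str],
) -> list[str]:
    """Return what stops an offer from moving into verification (empty list = hand off).

    method: requirement field -> buyer-defined (low, high) range, or None if undefined.
    offer_row: requirement field -> status from the normalized review grid.
    """
    undefined = [field for field, spec in method.items() if spec is None]
    if undefined:
        # Verifying now would mean auditing against an unfinished standard.
        return [f"method field undefined: {field}" for field in undefined]
    # Strict assumption: every defined field must already be matched.
    return [f"offer not matched on: {field}"
            for field in method if offer_row.get(field) != "MATCHED"]


method = {"core_id_mm": (44.0, 46.0), "sheets_per_roll": (480, 520)}
print(verification_blockers(method, {"core_id_mm": "MATCHED", "sheets_per_roll": "GAP"}))
# ['offer not matched on: sheets_per_roll']
```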

For teams evaluating converting partners or vertically integrated suppliers, understanding where parent roll specifications feed into finished AFH product performance is useful context for building credible downstream requirements. Bath tissue parent roll suppliers and toilet tissue mills represent the upstream input into finished tissue quality — and understanding that supply chain relationship helps buying teams ask better questions at the finished-goods specification level.

The downstream stages — remote specification verification, technical specification matching, and evidence pack evaluation — each depend on a completed, buyer-owned method as their input. Without it, verification and proof discipline are applied to a standard that was never fully defined.

A Practical Starting Matrix for the Next Quote Cycle

The following matrix is a simplified in-article preview of the AFH Toilet Tissue Specification Framework Matrix — a cross-functional working document built for Procurement and QA to use together. The full matrix separates mandatory requirement fields, tolerance notes, end-use assumptions, and verification checkpoints in a format designed to survive a cross-functional quote review.

The preview below is structured to be immediately usable. Fields marked as “buyer-specified” are placeholders that the buying team fills in based on actual dispenser hardware, facility type, and operational requirements. The method’s value comes from completing those fields — not from the structure alone.

AFH Toilet Tissue Specification Framework Matrix (In-Article Preview)

| Field Group | Requirement Field | Buyer-Defined Value / Range | Tolerance Note | End-Use Assumption | Verification Checkpoint |
|---|---|---|---|---|---|
| End-Use Setting | Facility type | Healthcare / Hospitality / Education / Public / Jan-san | n/a | Determines which performance fields are critical vs. secondary | Confirm with Facility Operations before method is finalized |
| Dispenser Environment | Core inside diameter | Buyer-specified in mm | ± buyer-defined mm per installed dispenser spec | Fixed-core dispensers require tighter tolerance | Supplier to confirm on product data sheet |
| | Roll outside diameter | Buyer-specified in mm | ± buyer-defined mm | High-capacity dispensers may accept a wider band | Measure sample against installed dispenser hardware |
| | Sheet width | Buyer-specified in mm | ± buyer-defined mm | Dispenser sheet channel constraint | Confirm on production specification sheet |
| Roll Format | Number of plies | 2-ply; confirm bonding method | Exact; no tolerance | Bonding method affects sheet integrity under load | State bonding method explicitly in inquiry |
| | Sheets per roll | Buyer-specified | ± buyer-defined % | Affects replenishment scheduling across estate | Verify count on independently measured sample |
| | Roll format type | Standard / Jumbo / Mini-Jumbo | n/a | Must match installed dispenser format | Confirm against dispenser manufacturer specification |
| Performance Priorities | Dry tensile strength (MD + CD) | Buyer-defined minimum | State test method reference | High-traffic AFH load conditions | Third-party test report from accredited laboratory |
| | Wet tensile strength | Buyer-defined minimum | Per ISO 12625-5 | Critical in healthcare and high-humidity environments | Third-party test report required |
| | Sheet softness / tactile performance | Ranked priority: High / Medium | No hard value unless healthcare context | Guest-facing vs. functional use environments | Sample panel evaluation by end-use stakeholder |
| Acceptance Thresholds | Grammage per ply (gsm) | Buyer-defined range | ± buyer-defined gsm per ISO 536 | Higher gsm supports sheet integrity in heavy-use settings | Third-party test report required |
| | Moisture content | Buyer-defined maximum % | Per ISO 287 | Affects softness consistency and roll stability in storage | Certificate of conformance or test report |
| Evidence Expectations | Test report source | Accredited third-party laboratory | n/a | n/a | Laboratory accreditation confirmation required |
| | Sample correspondence | Production-representative sample | n/a | Sample must correspond to the proposed production batch | Confirm batch origin on delivery documentation |
| | Sustainability certification | FSC or equivalent if required | Certificate scope and geolocation data must cover the supplied product | Applies in sustainability-mandated and legally regulated procurement environments | Verify certificate scope against supplied SKU |

This matrix is not a supplier scorecard. It’s the pre-commercial structure that makes a supplier scorecard meaningful. A team that completes it before the first inquiry goes out has defined what “fit” means. Every incoming quote can be evaluated against a fixed standard. Every gap is identifiable. Every comparison is defensible — to QA, to finance, to operations, and to any senior stakeholder who asks why one supplier was chosen over another.
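The matrix is only as useful as the fields the team has actually completed, so a simple pre-inquiry check can list the rows that still carry a placeholder. This sketch assumes the matrix is kept as a list of records and that unfilled entries still contain the words "buyer-specified" or "buyer-defined", as in the preview. The sample rows are illustrative, and the completed values in them are made up.

```python
PLACEHOLDER_MARKERS = ("buyer-specified", "buyer-defined")


def unfilled_fields(matrix: list[dict[str, str]]) -> list[str]:
    """Return the requirement fields whose value or tolerance still holds a placeholder."""
    pending = []
    for row in matrix:
        cells = (row.get("value", ""), row.get("tolerance", ""))
        if any(marker in cell.lower() for cell in cells for marker in PLACEHOLDER_MARKERS):
            pending.append(row["field"])
    return pending


# Illustrative rows: one still a placeholder, two completed with made-up values.
matrix = [
    {"field": "Core inside diameter", "value": "Buyer-specified in mm",
     "tolerance": "± buyer-defined mm per installed dispenser spec"},
    {"field": "Sheet width", "value": "100 mm", "tolerance": "± 2 mm"},
    {"field": "Moisture content", "value": "8 % max", "tolerance": "Per ISO 287"},
]
print(unfilled_fields(matrix))  # ['Core inside diameter']
```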

For a wider view of the bathroom tissue rolls landscape across AFH formats, browsing the available product categories helps teams understand the range of configurations they may encounter in supplier quotes and what variation to plan for in the requirement fields.

From Two Quotes to One Defensible Decision

Two offers were open on the screen at the start. Both featured identical generic labeling. The team couldn’t explain which one was actually fit — because the standard for “fit” didn’t exist yet.

That is the real cost of skipping specification-first procurement: it doesn’t show up as a single dramatic failure. It shows up as a review meeting where no one can answer the QA question cleanly, an operations complaint six weeks after rollout, or a procurement decision that gets second-guessed internally because the rationale was never documented in requirement language.

The buyer-owned specification method described here changes that sequence. It puts the standard before the comparison. It gives Procurement and QA a shared document rather than competing assumptions. It makes the selection logic traceable — so the internal champion can defend the decision, not just describe it.

Define requirements first. Normalize supplier inputs against them. Apply tolerance discipline. Hand off to verification only after fit is defined.

Clear requirements. Comparable offers. Defensible decisions.

Explore Related Resources

Browse bath tissue rolls for a product-category orientation across AFH formats and configurations.

Review toilet tissue jumbo roll suppliers when evaluating vertically integrated or converting partners as part of the supplier comparison process.

Referenced Standards

- ISO 536 — Paper and board — Determination of grammage

- ISO 287 — Paper and board — Determination of moisture content of a lot — Oven-drying method

- ISO 12625-1 — Tissue paper and tissue products — Part 1: Vocabulary

- ISO 12625-5 — Tissue paper and tissue products — Part 5: Determination of wet tensile strength

Disclaimer:

This content is for general informational purposes only and is not legal, technical, procurement, QA, or other professional advice. Suitability, compliance, and sourcing outcomes vary by site, supplier, product, and operating conditions.

Our Editorial Process:

Our expert team uses AI tools to help organize and structure our initial drafts. Every piece is then extensively rewritten, fact-checked, and enriched with first-hand insights and experiences by expert humans on our Insights Team to ensure accuracy and clarity.

About the PaperIndex Insights Team:

The PaperIndex Insights Team is our dedicated engine for synthesizing complex topics into clear, helpful guides. While our content is thoroughly reviewed for clarity and accuracy, it is for informational purposes and should not replace professional advice.