📌 Key Takeaways

A drop test report becomes usable procurement evidence only when it states the test method, exact configuration tested, drop schedule, and acceptance criteria—without these four elements, “PASSED” means nothing defensible.

- Configuration Mismatches Invalidate Everything: If the tested carton dimensions, board grade, cushioning, or closure differ from your RFQ specification, the report cannot qualify that supplier.

- Marketing Summaries Are Not Evidence: Lab-style reports include method, configuration, schedule, results, and criteria; one-page summaries with green checkmarks do not support approval decisions.

- Use the Twelve-Question Checklist: Validating method validity, configuration comparability, data completeness, and acceptance criteria prevents approving non-comparable reports from competing suppliers.

- Apply Three Qualification Phases: Phase 1 checks completeness, Phase 2 verifies supporting artifacts, Phase 3 triggers escalation. Structured phases replace subjective judgment with repeatable decisions.

Comparable evidence beats vendor promises every time.

Procurement managers qualifying corrugated box suppliers for electronics packaging will find a repeatable validation framework here, starting with the elements of a usable report and ending with the detailed checklist and qualification phases.

The email satisfies exactly nobody. Subject line: “Drop Test – PASSED.” A single-page PDF. Company logo, green checkmark, no data. The risk is not the test itself—it is approving a report that cannot be defended later because the method, package build, or acceptance criteria are not comparable to what will actually ship.

You need to qualify this supplier for an electronics packaging contract using the same evidence-based methodology that prevents qualification failures across all corrugated box sourcing programs. The report offers a single word, “passed,” but nothing defensible. No test standard. No configuration details. No way to compare it against the other three suppliers who also claim their boxes “passed.”

A drop test report is usable procurement evidence when it clearly states the test method, the exact product-and-packaging configuration tested, the drop schedule (heights, orientations, sequence), and the acceptance criteria used to declare a pass. If any of those elements are missing or mismatched, treat “PASS” as non-comparable and request an evidence-complete report or an aligned re-test. This guide breaks those elements down so procurement can validate completeness, compare suppliers on an apples-to-apples basis, and decide when to request additional testing or escalation to packaging engineering.

What a Drop Test Report Is (and What It Isn’t)

A drop test simulates vertical impacts that packages experience during handling and distribution: warehouse drops, conveyor transfers, loading and unloading. For corrugated box sourcing in electronics applications, these impacts determine whether products arrive intact or generate returns.

In practice, two very different artifacts get called “drop test reports.” Marketing-style summaries often provide a claim with limited detail—company letterhead, a pass stamp, perhaps a single photograph. This pattern repeats the same false economy documented in why ‘cheap’ boxes cost more — accepting incomplete evidence to accelerate approval creates damage expenses that dwarf any initial savings. Lab-style reports include the test method, exact configuration tested, drop schedule with heights and orientations, observed results, and defined acceptance criteria. Only the second type supports a defensible approval decision.

Drop testing fits within a broader packaging qualification system. It validates one hazard type—vertical impact—but doesn’t address compression, vibration, or environmental exposure. Total protection strategies integrate multiple validation methods to prevent the accountability gaps that occur when buyers treat passing one test as comprehensive approval. Procurement teams treating a single drop test as blanket approval misunderstand its scope.

Start with the Test Method and Scope

The first field to verify: which standard governed the test?

Recognized frameworks include ISTA test procedures developed by the International Safe Transit Association, ASTM D5276 covering free-fall drop testing of loaded containers, and ISO 2248 for vertical impact testing of complete transport packages. Regulatory contexts like hazardous materials packaging follow 49 CFR 178.603 requirements.

The standard must match actual distribution hazards. A test designed for lightweight parcel shipments won’t validate packaging intended for palletized freight with forklift handling. Confirm the test level or severity aligns with the shipping modes, handling intensity, and transit distances your supply chain involves.

Minimum fields procurement should verify: the standard or procedure name, test level designation, sample conditioning requirements (temperature, humidity, duration before testing), and the explicit pass/fail definition. Reports omitting the test standard warrant immediate clarification requests, not approval.

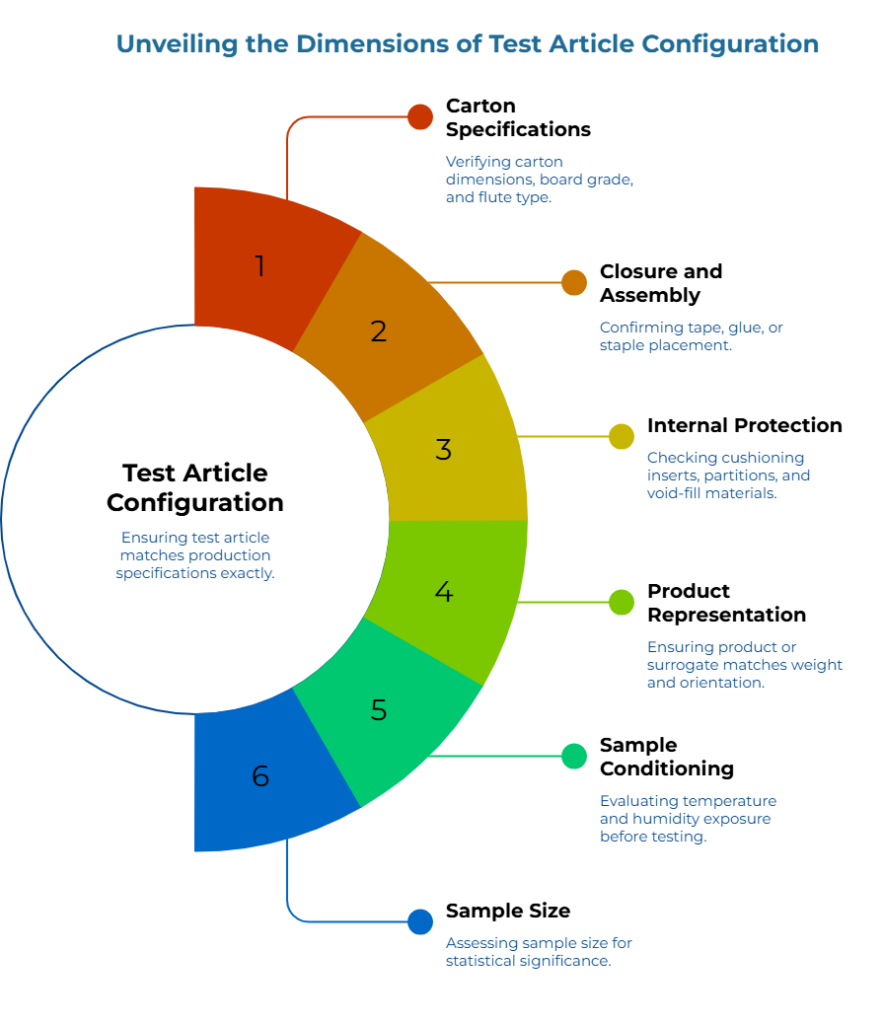

Confirm the Test Article Configuration

Configuration mismatches invalidate results entirely. A ‘passed’ report means nothing if the tested carton used different dimensions, heavier board grade, or cushioning inserts absent from production specifications. The same spec discipline that prevents qualification drift in ongoing supply also governs initial testing—what you test must match exactly what you’ll receive.

Verify alignment across these elements:

Carton specifications: Internal and external dimensions, board grade (such as 32 ECT or 200# burst), flute type (B, C, BC double-wall), and manufacturer if specified in your sourcing requirements.

Closure and assembly: Tape type and application pattern, glue specifications, or staple placement. Closure method affects corner and edge integrity under impact.

Internal protection: Cushioning inserts, molded pulp, foam blocks, corrugated partitions, and void-fill materials. For electronics, the cushioning system often determines pass/fail outcomes more than the outer carton.

Product representation: Actual product or test surrogate, weight distribution, orientation within the package, and any fragile component positioning.

Sample conditioning: Temperature and humidity exposure before testing. Conditioning affects board moisture content and cushioning performance.

Sample size matters. Single-sample tests carry inherent variability risk. Multi-sample protocols (three or five units) provide more defensible evidence. Note whether all samples used identical configurations—any variation between test articles undermines comparability.

Reports lacking specific material callouts (e.g., ‘32 ECT’ or ‘2.0 pcf PE foam’) should be classified as development data rather than final qualification evidence.
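As a rough illustration of the alignment check described in this section, the sketch below compares a tested configuration against an RFQ specification field by field. The field names (`internal_dims_mm`, `board_grade`, `flute`, `closure`, `cushioning`) and all sample values are hypothetical placeholders, not a standard schema; a real comparison would use whatever fields your own specification defines.

```python
def config_mismatches(rfq_spec: dict, tested: dict) -> dict:
    """Return {field: (rfq_value, tested_value)} for every field that differs.

    A field missing from the tested configuration is reported as None,
    since an undocumented element cannot be assumed to match.
    """
    return {
        field: (expected, tested.get(field))
        for field, expected in rfq_spec.items()
        if tested.get(field) != expected
    }


# Hypothetical values for illustration only.
rfq_spec = {
    "internal_dims_mm": (400, 300, 200),
    "board_grade": "32 ECT",
    "flute": "C",
    "closure": "48 mm tape, H-pattern",
    "cushioning": "2.0 pcf PE foam end caps",
}
tested = {
    "internal_dims_mm": (400, 300, 200),
    "board_grade": "44 ECT",  # heavier board than the RFQ calls for
    "flute": "C",
    "closure": "48 mm tape, H-pattern",
    # cushioning is not documented in the report
}

mismatches = config_mismatches(rfq_spec, tested)
for field, (expected, found) in mismatches.items():
    print(f"{field}: RFQ={expected!r}, tested={found!r}")
```

Treating an undocumented field as a mismatch is a deliberate choice in this sketch: it mirrors the rule that vague or absent configuration details cannot qualify a supplier.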

Read the Drop Schedule: Height, Orientation, and Sequence

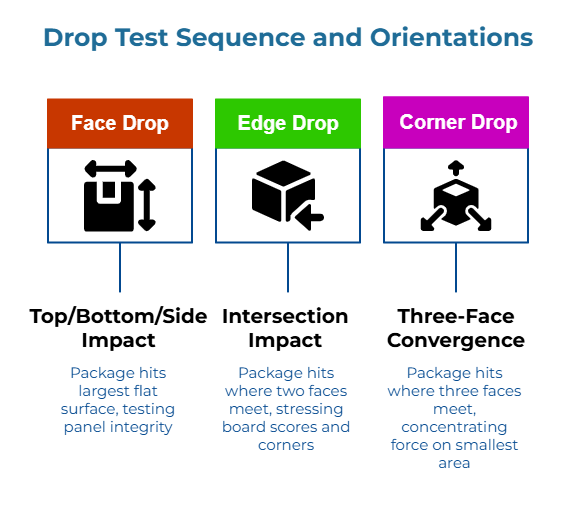

Drop tests subject packages to impacts from multiple orientations, each stressing the structure differently.

- Face drops impact the largest flat surfaces—top, bottom, or sides. These test panel integrity and closure strength but distribute force across a wide area.

- Edge drops impact the intersection where two faces meet. Force concentrates along a line, stressing board score lines and corner joints.

- Corner drops impact the point where three faces converge. Energy concentrates on the smallest contact area, creating the most severe localized stress. For corrugated boxes protecting electronics, corner drops typically represent worst-case conditions because they maximize stress on both carton structure and internal cushioning.

Confirm the report documents: drop height(s) (verified against the specified standard’s units, e.g., cm or inches), number of drops per orientation, the exact sequence performed, and whether fresh samples or the same samples underwent successive drops. Sequence matters—a package surviving a corner drop when fresh may fail the same impact after absorbing cumulative damage from earlier face and edge tests. Sequential testing on single samples reveals progressive failure modes that fresh-sample protocols miss.

Under-testing hides failure risk. Fewer drops, lower heights, or skipped orientations produce favorable results that won’t replicate in actual distribution.
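To see why a lower drop height flatters the result, it helps to recall basic free-fall physics: neglecting air resistance, impact velocity is the square root of 2gh, and the energy delivered at impact scales linearly with height. The sketch below applies those textbook formulas. The 60 cm and 76 cm heights and the 5 kg packaged mass are illustrative inputs only, not values taken from any standard.

```python
import math

G = 9.80665  # standard gravity, m/s^2


def impact_velocity(height_m: float) -> float:
    """Free-fall impact velocity in m/s, ignoring air resistance."""
    return math.sqrt(2 * G * height_m)


def impact_energy(mass_kg: float, height_m: float) -> float:
    """Potential energy converted at impact, in joules (m * g * h)."""
    return mass_kg * G * height_m


# Illustrative comparison of two drop heights for a 5 kg package.
for h in (0.60, 0.76):
    print(f"{h:.2f} m: {impact_velocity(h):.2f} m/s, "
          f"{impact_energy(5.0, h):.1f} J")
```

Because energy is proportional to height, a 60 cm drop delivers roughly 79% of the energy of a 76 cm drop for the same package, which is why drop heights must match before two results are compared.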

Decode the Results: What the Numbers Mean

Results documentation takes two primary forms depending on test instrumentation.

Instrumented Testing

Instrumented tests use accelerometers mounted inside the package or on the product itself. These measure g-force (peak acceleration during impact) and shock pulse duration. The numbers matter when engineering has defined product fragility limits—the maximum acceleration a component can withstand without functional or cosmetic damage.

If a report shows 47 g peak acceleration and your product’s validated fragility limit is 40 g, the packaging failed to protect adequately regardless of visual appearance. Instrumented data enables objective comparison when acceptance criteria tie to specific g-force thresholds.
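The comparison in the example above can be sketched as a simple check. The function names are hypothetical, and treating a reading exactly at the limit as acceptable is an assumption; engineering may require additional margin below the fragility limit.

```python
def protects_product(peak_g: float, fragility_limit_g: float) -> bool:
    """True when measured peak acceleration stays within the fragility limit.

    Assumption: a reading equal to the limit counts as acceptable.
    """
    return peak_g <= fragility_limit_g


def margin_percent(peak_g: float, fragility_limit_g: float) -> float:
    """Headroom below the limit as a percentage; negative means exceeded."""
    return (fragility_limit_g - peak_g) / fragility_limit_g * 100


# Values from the example in the text: 47 g measured, 40 g limit.
print(protects_product(47, 40))
print(f"{margin_percent(47, 40):.1f}% margin")
```

A negative margin signals that the measured shock exceeded the limit, regardless of how the carton looks after the drop.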

Visual-Only Testing

Visual-only reports document outcomes through photographs and inspection checklists. These reports should specify damage criteria explicitly: what constitutes cosmetic damage versus structural failure versus functional impairment, what inspection protocol was followed, and who performed the evaluation.

The comparability rule applies regardless of documentation method. Two suppliers both reporting “passed” provide non-comparable evidence if one tested at 76 cm drop height with 50 g acceptance while another tested at 60 cm with no instrumentation. Side-by-side evaluation necessitates identical methodologies, builds, and success thresholds.
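One way to apply the comparability rule across several suppliers is to group reports by a test "signature" and compare only within a group. The sketch below is a minimal illustration: the field names, the `build` label, and the choice of ASTM D5276 as the method are assumptions made for the example, not details from any actual report.

```python
from collections import defaultdict


def group_comparable(reports: list[dict]) -> dict:
    """Group supplier names by (method, build, drop height, acceptance rule).

    Only suppliers that land in the same group can be compared side by side.
    """
    groups = defaultdict(list)
    for r in reports:
        key = (r.get("method"), r.get("build"),
               r.get("drop_height_cm"), r.get("acceptance"))
        groups[key].append(r["supplier"])
    return dict(groups)


# Hypothetical reports for illustration only.
reports = [
    {"supplier": "Supplier A", "method": "ASTM D5276", "build": "RFQ rev B",
     "drop_height_cm": 76, "acceptance": "peak <= 50 g"},
    {"supplier": "Supplier B", "method": "ASTM D5276", "build": "RFQ rev B",
     "drop_height_cm": 60, "acceptance": None},  # no instrumented criterion
    {"supplier": "Supplier C", "method": "ASTM D5276", "build": "RFQ rev B",
     "drop_height_cm": 76, "acceptance": "peak <= 50 g"},
]

groups = group_comparable(reports)
for signature, suppliers in groups.items():
    print(signature, "->", suppliers)
```

In this example Suppliers A and C share a signature and can be compared directly, while Supplier B stands alone and would need an aligned re-test first.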

Anatomy of a Drop Test Report: What to Find Fast

A procurement review is faster when reports follow a predictable structure. Complete reports typically contain six essential blocks:

- Cover / Identification: Report ID, test date, lab name (if applicable), sample count

- Test Method and Scope: Named procedure, selected level or options, conditioning parameters

- Test Article Configuration: Product and packaging build, photographs or diagrams, packaged mass

- Drop Schedule: Heights with stated units, orientations, sequence, number of drops per orientation

- Results and Observations: Package damage documentation, product condition checks, inspection notes

- Acceptance Criteria and Disposition: Written pass/fail rules and final result statement

If any block is missing, the report may still provide useful information—but it should not be treated as comparable qualification evidence.
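A quick completeness screen for the six blocks might look like the sketch below. The block keys are hypothetical names chosen for this example; reports do not follow a fixed schema, so a reviewer would map each supplier's headings onto these keys by hand.

```python
REQUIRED_BLOCKS = (
    "identification",
    "method_and_scope",
    "test_article_configuration",
    "drop_schedule",
    "results_and_observations",
    "acceptance_criteria",
)


def missing_blocks(report: dict) -> list[str]:
    """List required blocks that are absent or empty in a report."""
    return [block for block in REQUIRED_BLOCKS if not report.get(block)]


# A marketing-style summary: an ID and a stamp, nothing else (hypothetical).
summary = {"identification": {"report_id": "R-001"}, "disposition": "PASSED"}

gaps = missing_blocks(summary)
print(f"{len(gaps)} of {len(REQUIRED_BLOCKS)} blocks missing: {gaps}")
```

A non-empty result does not make the report worthless, but under the rule above it should not be treated as comparable qualification evidence.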

Procurement Checklist: Twelve Questions to Validate a Supplier’s Drop Test Report

Before using any report for qualification decisions, work through these verification questions grouped by evaluation category.

Method Validity

- Does the report name a recognized test standard (ISTA, ASTM, ISO, or equivalent)?

- Does the specified test level match your distribution severity and handling environment?

- Are sample conditioning parameters documented (temperature, humidity, conditioning duration)?

Configuration Comparability

- Do tested carton dimensions match your sourced specification exactly?

- Do board grade and flute construction match?

- Are all cushioning materials, inserts, and void-fill documented and consistent with your specification?

- Does tested product weight match your actual product (or does the surrogate weight match)?

Data Completeness

- Are all drop heights, orientations, and the complete sequence documented?

- Is sample size stated, and is it adequate for the risk level (minimum three samples for high-value applications)?

- Does the report include photographic evidence or instrumented data supporting the pass/fail determination?

Acceptance Criteria and Accountability

- Are pass/fail criteria explicitly defined with measurable thresholds?

- Is the report signed or certified by a responsible party with a clear test date?

Red Flags Requiring Escalation

- Test standard missing or unrecognizable

- Configuration details absent, vague, or clearly mismatched to sourced specification

- Single-sample testing without documented justification

- No photographs, raw data, or damage documentation

- Pass/fail criteria subjective or undefined

- Unsigned report or missing test date

Any red flag warrants requesting additional documentation before qualification—not accepting the report provisionally.
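The red-flag screen can be sketched as a small rule table. Each rule below reads a yes/no judgment that a reviewer has already recorded; the field names (`recognized_standard`, `config_matches_rfq`, and so on) are hypothetical, and the code does not attempt to judge a report automatically.

```python
RED_FLAG_RULES = {
    "Test standard missing or unrecognizable":
        lambda r: not r.get("recognized_standard", False),
    "Configuration absent, vague, or mismatched":
        lambda r: not r.get("config_matches_rfq", False),
    "Single-sample testing without documented justification":
        lambda r: r.get("sample_count", 0) <= 1
        and not r.get("single_sample_justified", False),
    "No photographs, raw data, or damage documentation":
        lambda r: not r.get("has_photos_or_data", False),
    "Pass/fail criteria subjective or undefined":
        lambda r: not r.get("measurable_criteria", False),
    "Unsigned report or missing test date":
        lambda r: not r.get("signed_and_dated", False),
}


def red_flags(report: dict) -> list[str]:
    """Return the label of every red flag raised by the reviewer's answers."""
    return [label for label, rule in RED_FLAG_RULES.items() if rule(report)]


# Hypothetical reviewer answers for one supplier report.
review = {
    "recognized_standard": True,
    "config_matches_rfq": True,
    "sample_count": 1,
    "single_sample_justified": False,
    "has_photos_or_data": True,
    "measurable_criteria": True,
    "signed_and_dated": True,
}

flags = red_flags(review)
print("Request documentation:" if flags else "No red flags", flags)
```

Unanswered questions default to raising the flag, so a report cannot pass the screen simply because a field was left blank.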

Drop Test Glossary

| Term | Procurement Meaning | Where to Find It in Report |
| --- | --- | --- |
| Test method / standard | The named procedure and version that governed the test | Cover page, “Method,” “Procedure” section |
| Test article | The exact product-plus-packaging configuration that was tested | “Package description,” configuration section, photographs |
| Acceptance criteria | The written rule defining pass or fail outcomes | “Criteria,” “Requirements,” “Disposition” section |
| Drop schedule | Heights in defined units (e.g., inches, cm, or meters), orientations, sequence, drop counts | “Schedule,” “Sequence,” drop table |
| Face drop | Impact on a flat face of the package; distributes force across large area | Orientation diagram or legend |
| Edge drop | Impact on an edge line where two faces meet; concentrates force along a line | Orientation diagram or legend |
| Corner drop | Impact on a corner point where three faces converge; often worst-case scenario | Orientation diagram or legend |
| G-force (peak acceleration) | Maximum deceleration during impact, expressed as multiples of gravity | “Instrumentation,” “Data,” results appendix |
| Impact velocity | Speed at which package contacts the drop surface; determined by drop height | “Instrumentation,” calculations, appendix |
| Shock pulse duration | Time span of the impact event in milliseconds | “Instrumentation,” “Pulse,” appendix |
| Sample conditioning | Pre-test exposure to controlled temperature and humidity | “Conditioning,” “Atmosphere,” test setup section |
| Damage criteria | Definitions specifying cosmetic vs. structural vs. functional damage | “Evaluation methodology,” inspection protocol section |

Using the Report in RFQs, Qualification Phases, and Supplier Selection

Validated reports become the foundation for evidence pack requirements in future corrugated box sourcing activities. The corrugated box RFQ checklist provides a structured framework for aligning procurement requirements with engineering specifications before supplier outreach.

RFQ specifications should define the required test standard and level, the exact configuration to be tested (matching your production specification), minimum sample size, required documentation elements (photographs, instrumented data if applicable, signed certification), and acceptance criteria aligned with your product’s fragility limits or quality thresholds.

Three-Phase Qualification Framework

Phase 1: Verification of Completeness. Approve only if method, configuration, drop schedule, and acceptance criteria are explicit and aligned to the RFQ. Complete reports with comparable configurations proceed to Phase 2.

Phase 2: Artifact Alignment. Require supporting artifacts (photographs, configuration diagrams, inspection logs) so the report remains auditable across stakeholders. Reports meeting documentation standards proceed to Phase 3.

Phase 3: Technical Escalation. If any key element is mismatched (different box build, different cushioning, different closure, unclear criteria), require a re-test aligned to the RFQ configuration or escalate to packaging engineering for technical evaluation before any sourcing decision.
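The three phases can be read as a sequential decision, sketched below. This is one interpretation of the framework, not a prescribed procedure: the function name, the three boolean inputs, and the wording of the returned actions are all assumptions made for illustration.

```python
def qualification_decision(complete: bool,
                           artifacts_present: bool,
                           aligned_to_rfq: bool) -> str:
    """Walk a report through the three phases and return the next action.

    complete          -- method, configuration, schedule, criteria all explicit
    artifacts_present -- photographs, diagrams, inspection logs supplied
    aligned_to_rfq    -- tested build and criteria match the RFQ
    """
    if not complete:
        return "Phase 1: request an evidence-complete report"
    if not artifacts_present:
        return "Phase 2: request supporting artifacts"
    if not aligned_to_rfq:
        return "Phase 3: require aligned re-test or escalate to packaging engineering"
    return "Approve: report usable as qualification evidence"


print(qualification_decision(True, True, False))
```

Returning the first unmet phase keeps the outcome repeatable: two reviewers who record the same three answers reach the same next action.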

The broader corrugated box sourcing framework shows how evidence packs and qualification phases integrate into a systematic supplier management approach that moves packaging from commodity purchasing to controlled input.

Expanding the Qualified Supplier Set

When current suppliers cannot provide comparable evidence—or when qualification requirements eliminate options from consideration—broadening the supplier pool becomes necessary.

Corrugated box suppliers listed on PaperIndex offer a starting point for identifying additional candidates. Browse corrugated boxes product listings to review available formats, specifications, and supplier capabilities.

Apply identical evidence requirements from initial outreach using the zero-trust sourcing model that treats every new candidate as unverified until documentation closes all five evidence gates. Request complete drop test reports as part of qualification submissions, validate comparability using the same checklist, and use qualification phases consistently across all candidates. The objective is building a qualified supplier set where every approval rests on evidence procurement can defend—not vendor claims that cannot be verified.

Disclaimer:

This content provides educational guidance for procurement professionals and does not constitute engineering advice. Consult qualified packaging engineers for product-specific testing requirements, fragility assessments, and regulatory compliance determinations.

About the PaperIndex Insights Team:

The PaperIndex Insights Team is our dedicated engine for synthesizing complex topics into clear, helpful guides. While our content is thoroughly reviewed for clarity and accuracy, it is for informational purposes and should not replace professional advice.