📌 Key Takeaways

Corrugated box sourcing failures usually trace back to system gaps rather than isolated supplier defects. Preventing costly QC rejects takes spec discipline, evidence verification, and staged qualification.

- Specification Clarity Prevents Disputes: Name exact test methods (ISO 3037 for ECT, ASTM D642 for compression) with tolerances and acceptance criteria to eliminate supplier interpretation gaps.

- Normalize Quotes Before Comparing Prices: Align all suppliers on a single Incoterms baseline, pack configuration, and set of landed cost components to reveal true door-to-door costs.

- Evidence Packs Convert Claims Into Proof: Require test reports with named methods, process controls documentation, and traceability artifacts before qualification—not marketing promises.

- Pilot Before Scaling Volume: Run a three-gate qualification (sample approval, pilot run, controlled ramp) to verify consistent performance before awarding full production commitments.

- Monitoring Cadence Prevents Drift: Quarterly spec reviews, revalidation triggers, and documented exception workflows catch specification changes before they become quality failures.

System thinking transforms commodity purchasing into repeatable assurance.

Procurement managers and packaging engineers responsible for corrugated box sourcing will gain a proven five-control framework here, preparing them for the maturity assessment and implementation sequence that follows.

The warehouse floor. Pallets stacked high. A forklift operator waves you over.

Crushed corners on the boxes from yesterday’s shipment—the ones you awarded to the lowest bidder six weeks ago. The damage report lands on your desk before lunch. Operations wants answers. Finance wants to know who approved this supplier. And somewhere in a folder on your desktop sits a spreadsheet with three quotes that all looked “comparable” at the time.

This wasn’t supposed to happen. You followed the process. You got competitive bids. You chose the best price.

Here’s what that QC reject moment reveals: corrugated box sourcing isn’t a purchasing transaction—it’s an operating system. The shift from commodity to assurance requires transforming fragmented quotes into a governed sourcing program.

The boxes that just failed weren’t defective in isolation. They failed because somewhere in the chain of decisions—spec definition, quote evaluation, supplier qualification, or ongoing monitoring—a gap existed that no one documented, tested, or governed.

With a systematic assurance framework, you can identify these gaps before they become damage claims, build quotes that actually compare, and verify supplier capability before committing volume. The result isn’t just fewer rejected shipments. It’s procurement confidence backed by evidence rather than assumptions.

Why Corrugated Boxes Behave Like a System, Not a SKU

The instinct to treat corrugated packaging as a commodity makes sense on the surface. Boxes look similar. Suppliers quote in familiar units. Price differences seem to be the primary variable worth evaluating.

But corrugated performance emerges from the interaction of multiple factors, not from any single specification line. Understanding these factors reveals why box failures are rarely about one variable in isolation.

Design and pack configuration shape how loads transfer during handling. A box isn’t just dimensions: style (regular slotted container, die-cut, wrap), internal fit, inserts, and closure method all influence stacking behavior and drop resistance. Pallet pattern, unitization, and void fill choices compound these effects. Two suppliers can quote the “same” box size and deliver very different in-transit outcomes because design intent was never fully specified.

Board properties determine baseline strength potential. Corrugated strength relates to liner and medium choices, flute profiles, and how those components work together. The right board for heavy appliances in long-haul lanes may be unnecessary—and wasteful—for lightweight consumer electronics shipped locally. Without explicit performance language, sourcing drifts toward whatever each supplier assumes is “standard.”

Conversion quality affects whether that baseline potential translates into repeatable performance. Even with appropriate board, scoring accuracy, joint quality, adhesive application, and dimensional consistency influence compression behavior and stacking alignment. When these controls are weak, variability rises—often showing up as intermittent failures that are hard to diagnose.

Distribution hazards provide the real-world stress test. Drops, vibration, humidity swings, long dwell times, and repeated handling are normal realities that corrugated performance must be framed against—not against a supplier’s best-case scenario. Organizations like ISTA provide distribution test procedure frameworks when teams need a shared vocabulary for lane realism.

This is why the QC reject moment rarely traces back to a single invoice line. The crushed corners on those boxes? Possibly a board grade issue. Possibly a conversion defect. Possibly a design mismatch with actual distribution hazards. Possibly all three, compounded by humidity exposure during a delayed shipment. Commodity thinking assumes these variables are someone else’s problem. Assurance thinking builds systems to govern them.

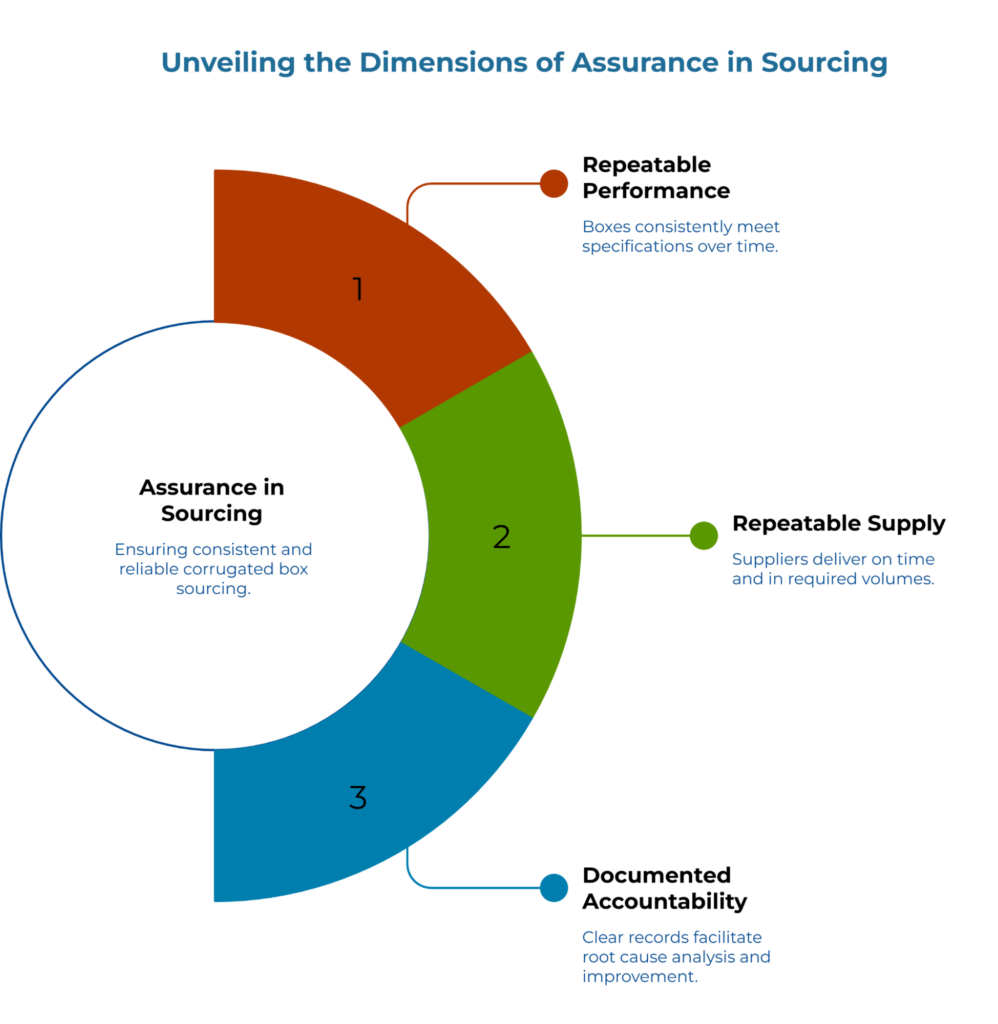

What “Assurance” Means in Sourcing

Assurance, in operational terms, means three things working together: repeatable performance + repeatable supply + documented accountability.

Repeatable performance requires that boxes meeting your specification today will meet it next month and next year—regardless of which production shift runs the order or which paper machine supplies the linerboard. This demands clear specs, yes, but also evidence that suppliers can hold those specs consistently.

Repeatable supply means your qualified suppliers can deliver when you need them, at the volumes you need, without surprise lead time extensions or allocation cuts. It requires understanding supplier capacity constraints and having contingency relationships documented before disruptions occur.

Documented accountability closes the loop. When something goes wrong—and eventually something always does—the paper trail makes root cause analysis possible. Without documentation, disputes become blame games. With it, they become improvement opportunities.

Building assurance requires five interlocking controls that form the backbone of strategic sourcing:

- The first control is spec discipline—defining performance requirements in language suppliers can quote against and you can verify upon receipt.

- The second is quote comparability—structuring RFQs so that responses actually compare, rather than mixing Incoterms, assumptions, and scope in ways that make the “lowest price” meaningless.

- Third comes evidence packs—requiring suppliers to prove capability claims with documentation rather than marketing language.

- Fourth, qualification gates ensure that new suppliers prove themselves at sample and pilot scale before receiving volume commitments.

- And fifth, monitoring cadence prevents the drift that occurs when initial qualification fades into memory while production continues unchecked.

Each control reinforces the others. Spec discipline makes quote comparability possible. Evidence packs inform qualification decisions. Monitoring catches drift before it becomes a QC reject. Remove any one, and gaps open for the kind of failure that started this conversation.

Spec Discipline: Choosing the Right Performance Language

Specification clarity separates strategic sourcing from transactional purchasing. Vague specs invite interpretation—and suppliers will interpret in their favor, not yours.

The most common specification gap involves performance testing language. Two approaches dominate corrugated specifications: burst strength and edge crush test (ECT). Understanding when each applies prevents both over-specification (paying for performance you don’t need) and under-specification (discovering inadequate strength through field failures).

Matching test language to dominant hazards

Most sourcing breakdowns don’t come from selecting the ‘wrong’ metric—they come from using a metric without stating what it protects against. Understanding corrugated box flute and wall types helps translate protection needs into structural specifications. The key is to identify, in plain terms, the dominant hazard: “stacking load during warehousing,” “long-haul vibration,” “mixed parcel handling,” or “high humidity exposure.” Then align the test language accordingly.

Edge crush test (ECT) measures the board’s resistance to compression along its edge—the force direction that matters most when boxes stack on pallets. For applications where stacking strength determines success or failure, ECT provides more directly relevant data than burst strength. The shift toward ECT-based specifications in distribution packaging reflects this reality: most corrugated boxes fail from compression, not puncture. The ISO 3037 standard provides the internationally recognized methodology for edgewise crush resistance testing.

Burst strength (Mullen) measures the hydraulic pressure required to rupture the board face. While distinct from puncture testing, it correlates reasonably well with the board’s ability to withstand internal and external forces during rough handling. Legacy specifications often default to burst requirements because the test has existed longer and appears in older purchasing templates. For applications where puncture risk dominates—sharp-cornered contents, aggressive manual handling, exposure to pointed objects during transit—burst strength remains relevant.

Compression language addresses box-level performance. For electronics and appliances, compression failure is a common pathway to damage: a crushed corner can turn into a shifted product, a compromised seal, or a unit that cannot be sold as new. Where compression is a primary concern, ASTM D642 establishes the standard test method for determining compressive resistance of shipping containers. For selecting board and box construction from end-use performance requirements, ASTM D5639 offers practice-level guidance, which helps when choosing between ECT-based and burst-based specification language.

Avoiding specs that are technically sound but operationally weak

A sourcing spec can be technically correct and still fail operationally if it leaves room for interpretation. Three practical additions reduce that risk:

Tolerances on critical dimensions, squareness, and key fold lines prevent the scenario where boxes technically meet nominal dimensions but don’t stack reliably or fit automated equipment.

Material controls clarify allowable substitutions and recycled-content assumptions where relevant. “32 ECT” means little if the supplier can freely substitute materials that meet the number but behave differently on your line.

Change notification discipline defines what triggers re-approval. Without this, suppliers may “optimize” their processes in ways that keep test numbers within spec while changing performance characteristics you depend on.

Beyond the test method choice, effective specifications include:

- Dimensional tolerances, not just nominal dimensions—a 12″ × 12″ × 12″ box with ±1/4″ tolerance differs meaningfully from one with ±1/8″ tolerance

- Flute designation (A, B, C, E, or combinations), since flute profile affects both compression strength and cushioning

- Board construction (single wall, double wall, specific liner and medium weights)

- Performance test requirements with named test methods, not just numbers

- Acceptance criteria defining what “pass” means—including sample size and acceptable variation

A specification reading “32 ECT single-wall C-flute” provides a starting point. A specification reading “32 ECT minimum (tested per TAPPI T 811 or ISO 3037), single-wall C-flute, selected in accordance with ASTM D5639, tested per lot with n=5 samples…” provides a standard you can actually enforce.
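As a minimal sketch of how an enforceable line like this might be checked at receiving, the Python below assumes one possible acceptance rule: every one of the n=5 specimens in a lot must meet the 32 ECT minimum. The rule, the `EctRequirement` and `lot_passes` names, and the readings are illustrative assumptions, not taken from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EctRequirement:
    minimum_ect: float      # minimum edge crush value, e.g. 32 for "32 ECT"
    test_method: str        # named method the readings were taken under
    samples_per_lot: int    # n specimens tested per lot


def lot_passes(req: EctRequirement, readings: list[float]) -> bool:
    """Assumed rule: the lot passes only if exactly n readings are
    supplied and every one is at or above the minimum."""
    if len(readings) != req.samples_per_lot:
        raise ValueError(
            f"expected {req.samples_per_lot} readings, got {len(readings)}"
        )
    return all(r >= req.minimum_ect for r in readings)


req = EctRequirement(minimum_ect=32.0, test_method="TAPPI T 811", samples_per_lot=5)
good_lot = [33.1, 32.4, 34.0, 32.9, 33.5]   # made-up readings
weak_lot = [33.1, 31.6, 34.0, 32.9, 33.5]   # one specimen below the minimum

print(lot_passes(req, good_lot))
print(lot_passes(req, weak_lot))
```

A rule based on the lot average, or one that tolerates a single low reading, would be just as easy to encode. What matters is that the written spec says which rule applies.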

Quote Comparability: Prevent “Apples vs Oranges” Awards

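Three quotes sit open on your screen. Supplier A quotes FOB their plant. Supplier B quotes CIF your warehouse. (Both phrasings are already loose: under Incoterms® 2020, FOB and CIF are sea-freight rules tied to a named port, not to a plant or a warehouse.) Supplier C quotes “delivered” (often loosely implying DAP or DDP under Incoterms® 2020) with no specific rule cited. The unit prices differ by 15%. Which is actually cheapest?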

Without normalization, you cannot know. And the supplier with the lowest visible number may carry the highest true cost once you account for freight, insurance, duties, handling, and the risks each Incoterms term assigns to you versus them. The Incoterms normalization process converts mixed quotes to a true door-to-door comparison.

Quote comparability requires establishing a common basis before evaluating price. This means defining in your RFQ elements that make offers equivalent in the dimensions that materially affect supply and performance.

The RFQ scope that needs to be explicit

At minimum, the RFQ should make the following unambiguous:

A single Incoterms baseline. Specify which Incoterms 2020 rule applies, and require all suppliers to quote on that basis. If you need delivered pricing, say so. If you prefer FOB origin because you have preferred carriers, make that the baseline and handle freight separately. The goal is ensuring every quote includes—or excludes—the same scope elements.

Packaging configuration assumptions. How are boxes shipped to you—knocked down flat, or set up? On pallets, or floor-loaded? What pallet dimensions? How many units per pallet? Differences in these assumptions cascade into differences in freight costs, handling costs, and warehouse labor costs that the unit price doesn’t capture.

Included services. Artwork changes, plates and dies, sampling, testing documentation—each can be included or excluded, billed separately or bundled. Without clarity, quotes become incomparable.

Lead times, minimum order quantities, and changeover expectations. A lower unit price with 50,000-unit minimums and 8-week lead times may cost more operationally than a slightly higher price with 10,000-unit minimums and 3-week lead times—especially if your demand is variable or your storage space limited. Learn how to calculate landed cost to normalize quotes across different minimums and delivery terms.

Quality expectations and defect handling approach. What happens when something arrives off-spec? Replacement timelines, credit processes, and responsibility for return freight should be clarified before award, not negotiated during a crisis.

Landed cost components. If you’re importing, which party handles customs clearance? Who pays duties? Insurance? Drayage from port? Each ambiguity represents both a cost and a risk that should be visible before award, not discovered after.
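To make the normalization concrete, here is a rough Python sketch that adds the costs a buyer would still bear on top of each quoted unit price. All figures are invented, the field names are hypothetical, and applying duty as a flat fraction of unit price is a deliberate simplification, since the actual customs valuation basis depends on the jurisdiction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    supplier: str
    unit_price: float                 # price per box exactly as quoted
    freight_per_unit: float = 0.0     # buyer-paid freight not in the quote
    insurance_per_unit: float = 0.0   # buyer-paid insurance not in the quote
    duty_rate: float = 0.0            # simplification: fraction of unit price
    drayage_per_unit: float = 0.0     # buyer-paid port-to-door haulage


def landed_cost(q: Quote) -> float:
    """Door-to-door cost per unit under the assumptions above."""
    duty = q.unit_price * q.duty_rate
    total = (
        q.unit_price
        + q.freight_per_unit
        + q.insurance_per_unit
        + duty
        + q.drayage_per_unit
    )
    return round(total, 4)


quotes = [
    # Lowest visible price, but the buyer pays everything after origin.
    Quote("A", 1.00, freight_per_unit=0.18, insurance_per_unit=0.01,
          duty_rate=0.05, drayage_per_unit=0.03),
    # Freight and insurance included; duty and drayage still on the buyer.
    Quote("B", 1.10, duty_rate=0.05, drayage_per_unit=0.03),
    # Assumed to be fully delivered with nothing left for the buyer.
    Quote("C", 1.15),
]

ranked = sorted(quotes, key=landed_cost)
for q in ranked:
    print(q.supplier, landed_cost(q))
```

With these made-up inputs the supplier with the lowest quoted price ends up the most expensive at the door, which is the pattern normalization is meant to expose.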

“Comparability before cost”: a mini-checklist

Use this short checklist before looking at numbers:

- The same Incoterms baseline and delivery location are stated

- Board and performance language are specified using the same test naming

- Box style, dimensions, and tolerances match

- Bundle/pallet configuration assumptions match

- Sampling and documentation expectations match

- Change notification and substitution rules are stated

- Defect handling (replacement/credit process) is defined at a high level

Before comparing any prices, verify that quotes align on these fundamentals. The mindset of comparability before price eliminates the false confidence that comes from evaluating numbers that don’t actually describe the same thing.
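One way to turn the checklist into a gate is to refuse price comparison until every quote states the same value for each scope field. The sketch below is illustrative only: the field names, the `comparability_gaps` helper, and the sample quotes are assumptions, and a real RFQ would carry more fields.

```python
CHECKLIST_FIELDS = (
    "incoterm",
    "delivery_location",
    "test_method",
    "box_style",
    "pallet_config",
)


def comparability_gaps(quotes: dict[str, dict[str, str]]) -> dict[str, set[str]]:
    """Return each checklist field on which the quotes disagree or that
    at least one quote leaves unstated."""
    gaps: dict[str, set[str]] = {}
    for field in CHECKLIST_FIELDS:
        values = {quote.get(field, "MISSING") for quote in quotes.values()}
        if len(values) > 1 or "MISSING" in values:
            gaps[field] = values
    return gaps


base = {
    "incoterm": "FOB",
    "delivery_location": "Port X",
    "test_method": "ISO 3037",
    "box_style": "RSC",
    "pallet_config": "500 per pallet",
}
quotes = {
    "A": dict(base),
    "B": {**base, "incoterm": "CIF"},
    # Supplier C changes the Incoterm and never names a test method.
    "C": {
        "incoterm": "DAP",
        "delivery_location": "Port X",
        "box_style": "RSC",
        "pallet_config": "500 per pallet",
    },
}

gaps = comparability_gaps(quotes)
for field, values in gaps.items():
    print(field, sorted(values))
```

An empty result means the quotes describe the same scope and the prices can be compared. Anything else goes back to the suppliers for requoting first.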

Evidence Packs: Turning Supplier Claims Into Proof

“State-of-the-art facility.” “ISO certified.” “Decades of experience serving major brands.” Supplier marketing materials contain claims. Assurance-based sourcing requires converting those claims into documented evidence.

An evidence pack is simply the documentation a buyer requests to verify supplier capability before qualification—the foundation of the zero-trust sourcing model for overseas supplier verification through five evidence gates. Evidence packs reduce risk and shorten dispute cycles by agreeing, upfront, on what “capable” looks like and how it will be demonstrated.

What to request in an evidence pack

For corrugated box suppliers, an effective evidence pack includes:

Drawing and specification confirmation. The supplier returns your specification with their confirmation that they can meet each requirement—not just a verbal “yes,” but a marked-up document showing how their standard capabilities align with your needs, and flagging any elements that require special consideration.

Test reports with named methods. If you specify 32 ECT minimum, the evidence pack includes recent test reports from their facility or an accredited lab showing actual ECT results on comparable products, with the test method identified. “We test everything” is a claim. A test report with dates, sample sizes, and results is evidence. Where selection guidance is needed—deciding which test language to use for a given application—the practice-level framing under ASTM D5639 can be used as a reference point for method-selection vocabulary.

Process control documentation. How does the supplier ensure consistency? What incoming inspection do they perform on raw materials? What in-process checks occur during conversion? What final inspection gates exist before shipment? The answers reveal whether quality depends on individual vigilance or systemic controls.

Traceability artifacts. Can the supplier trace a finished box back to specific board lots, production dates, and machine settings? Traceability matters less during smooth operations and enormously during failure investigations. Lot coding, certificates of analysis where applicable, and production records that link finished goods to inputs demonstrate the capability.

Change notification commitment. Will the supplier notify you before changing materials, processes, or production locations? Undocumented changes are a primary source of specification drift. An explicit change notification policy—ideally documented in your purchase agreement—prevents surprises.

How suppliers should present evidence cleanly

For suppliers reading this, the evidence pack is an opportunity to differentiate. Buyers receive numerous quotes; most include claims, few include proof. Suppliers who proactively provide organized documentation—test reports, process descriptions, sample certifications—reduce buyer friction and demonstrate the operational maturity that justifies premium consideration.

A clean evidence pack usually has three traits:

- One-page summary that maps the buyer’s requirements to your controls—this demonstrates you understood the question before providing the answer.

- Organized artifacts with test reports, sign-offs, and process notes in one indexed folder. Scattered documentation signals scattered processes.

- Clear change rules that explain what you’ll treat as a “material change” requiring notification. This protects both parties and prevents misalignment from surfacing during production ramps.

The evidence pack isn’t bureaucratic overhead. It’s a sales tool that speaks louder than brochures. The trust protocol for supplier verification provides structured approaches for both sides.

Qualification Gates: Pilot Before Scale

New supplier qualification follows a staged approach: sample approval, pilot run, controlled ramp. Each gate serves a distinct purpose, and skipping gates invites the failures that the QC reject moment represents.

A practical three-gate model is often sufficient:

Sample approval verifies that a supplier can produce boxes meeting your specification. Samples should be production-representative: made on the equipment and from the materials that will run your orders, not hand-crafted by the quality lab for evaluation purposes. Evaluate samples against your documented acceptance criteria: confirm dimensions, fit, print, closure method, and basic workmanship. If your spec requires a 44 ECT heavy-duty grade, verify that the samples meet that edge crush value under the named test method. If dimensional tolerances matter, measure them. Document results.

Pilot run verifies that a supplier can produce your boxes consistently at order quantities. A perfect sample means little if the first production run varies wildly. Pilot runs should be large enough to exercise normal production variability—multiple pallets, not a single case. Run pilot quantities through your actual operations: filling, sealing, palletizing, shipping, handling at destination. The goal is exposing any gap between sample performance and production reality before volume commitments lock in.

Controlled ramp manages the transition from pilot success to full production volumes. Rather than shifting 100% of demand to a newly qualified supplier, ramp gradually—perhaps 20% of volume initially, increasing as performance data accumulates. This protects against the scenario where pilot success doesn’t predict scale performance, and maintains leverage if issues emerge.
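A controlled ramp can be written down as a simple rule so that volume increases follow performance data rather than optimism. The following is a sketch under assumed parameters: a 20-point step, a 1% maximum reject rate per review period, and a hold (not a rollback) when the threshold is exceeded. None of these numbers come from a standard.

```python
def next_volume_share(
    current_pct: int,
    reject_rate: float,
    max_reject_rate: float = 0.01,   # assumed threshold: 1% rejects
    step_pct: int = 20,              # assumed step: 20 percentage points
) -> int:
    """Raise the supplier's share by one step only if the latest review
    period stayed within the reject threshold; otherwise hold."""
    if reject_rate > max_reject_rate:
        return current_pct           # hold and investigate before ramping
    return min(100, current_pct + step_pct)


share = 20                            # initial share after a passed pilot
history = [share]
for period_reject_rate in [0.004, 0.02, 0.006, 0.003]:   # made-up data
    share = next_volume_share(share, period_reject_rate)
    history.append(share)

print(history)
```

Some teams may prefer to cut share after a bad period instead of holding it. That choice belongs in the qualification plan before the ramp starts.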

What “pass” looks like

The packaging consistently meets the documented spec and tolerances; handling outcomes match the stated hazard assumptions; documentation and traceability are usable in practice; change notification behavior is demonstrated, not merely promised.

When a gate fails, the goal is not punishment; it’s learning. Identify whether the failure came from design, board selection, conversion quality, or lane assumptions, then correct the system before proceeding. Piloting before scale is not unique to corrugated boxes: the same principles apply across packaging materials, where two-lane verification and sampling on actual lines help prevent panic buying.

Monitoring Cadence: Preventing Drift

Qualification isn’t permanent. Suppliers change. Materials change. Production conditions change. Markets change, creating pressure to cut corners. Without ongoing monitoring, today’s qualified supplier becomes tomorrow’s QC reject source.

Effective monitoring includes three elements: periodic specification reviews, re-test triggers, and exception handling workflows.

Periodic specification reviews—quarterly works for most relationships—confirm that your documented specifications still reflect your actual requirements, and that supplier capabilities still align. Requirements evolve as products change, distribution channels shift, or performance expectations adjust. A specification frozen at initial qualification may no longer describe what you need.

Re-test triggers define when incoming inspection or periodic testing occurs. Full inspection of every delivery isn’t practical for most operations, but complete trust without verification invites drift. Common approaches include testing first shipments from each production lot, random sampling at defined frequencies, or mandatory testing when suppliers notify material or process changes. The acceptance criteria frameworks used for containerboard, which set ISO-named tolerances and AQL bands for triage decisions, apply equally to finished boxes.
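These triggers are easy to encode so that the decision to test a delivery is recorded rather than improvised. In the sketch below, the function name and parameters are hypothetical, and a fixed every-Nth-shipment rule stands in for random sampling so that the example stays deterministic.

```python
def retest_reasons(
    first_from_lot: bool,
    change_notified: bool,
    shipment_index: int,      # 1-based count of shipments from this supplier
    sample_every: int = 5,    # assumed frequency: test every 5th shipment
) -> list[str]:
    """Return every reason this shipment should be tested on receipt.
    An empty list means no trigger fired."""
    reasons = []
    if first_from_lot:
        reasons.append("first shipment from production lot")
    if change_notified:
        reasons.append("supplier notified a material or process change")
    if shipment_index % sample_every == 0:
        reasons.append("periodic sample")
    return reasons


print(retest_reasons(False, False, 3))
print(retest_reasons(True, False, 3))
print(retest_reasons(False, True, 10))
```

Returning the reasons, rather than a bare yes or no, leaves a record that can be filed with the inspection result.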

Exception handling workflows document what happens when issues arise. Who gets notified? What investigation occurs? What corrective actions are required? What evidence confirms resolution? Without documented workflows, exceptions either become crises escalated to senior management, or get quietly absorbed until patterns compound into major failures. Neither outcome serves operational continuity.

A strategic sourcing system treats monitoring as ongoing, not occasional—a cadence rather than a reaction.

The Corrugated Box Sourcing Maturity Model

Where does your current sourcing approach fall? The following self-assessment maps capabilities across five domains from reactive (Level 1) to strategic (Level 5). This maturity model is designed to be used in a short working session with procurement, packaging engineering, and operations. Pick the best-fit level for each row. The lowest level across rows sets the “ceiling” for overall maturity and highlights the highest-leverage next step.

Five Maturity Levels

Level 1 — Reactive: sourcing is driven by urgent replenishment and short-term quotes

Level 2 — Basic Controls: core specs exist but leave room for interpretation; verification is inconsistent

Level 3 — Managed: scope alignment and qualification gates exist for key items; governance is defined

Level 4 — Verified: evidence packs and verification practices are standard; drift controls are active

Level 5 — Strategic: packaging is managed as a risk-control system; decisions are data-informed and repeatable

| Capability Domain | Level 1 (Reactive) | Level 2 (Basic Controls) | Level 3 (Managed) | Level 4 (Verified) | Level 5 (Strategic) |
| --- | --- | --- | --- | --- | --- |
| Spec Definition | Dimensions only; informal assumptions | Basic drawing + limited performance language | Performance language tied to hazards; tolerances defined | Test naming and acceptance criteria referenced; change rules stated | Spec is maintained as a living control document across lanes and product mix |
| Scope Alignment for Offers | Mixed Incoterms and unclear delivery scope | Delivery point usually stated | Incoterms baseline and pack configuration written into RFQ | Normalized scope and documentation requirements standard | Two-scenario scope planning for lane changes and demand swings |
| Supplier Evidence | Marketing claims relied upon | Some documents provided on request | Evidence pack requested for key suppliers | Evidence pack is mandatory and organized; traceability is usable | Evidence is audited and used to manage drift and improvements |
| Performance Verification | Issues addressed after failures | Occasional tests in disputes | Pilot shipments used for key items | Verification practices tied to hazards; revalidation triggers defined | Verification is integrated into governance with clear ownership and cadence |
| Governance and Monitoring | Firefighting; no cadence | Ad-hoc reviews | Quarterly reviews for priority SKUs | Structured exception handling and periodic revalidation | Cross-functional governance; continuous learning, fewer surprise failures |
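The scoring rule for this assessment, where the lowest row sets the overall ceiling, can be sketched in a few lines of Python. The `maturity_ceiling` helper and the example scores are hypothetical and only illustrate the arithmetic.

```python
def maturity_ceiling(scores: dict[str, int]) -> tuple[int, list[str]]:
    """Return the overall ceiling (the lowest level across domains) and
    the domains sitting at that level, which are the first to work on."""
    for domain, level in scores.items():
        if not 1 <= level <= 5:
            raise ValueError(f"{domain}: level must be 1-5, got {level}")
    ceiling = min(scores.values())
    focus = [domain for domain, level in scores.items() if level == ceiling]
    return ceiling, focus


# Example self-assessment from a working session (made-up scores).
scores = {
    "Spec Definition": 3,
    "Scope Alignment for Offers": 2,
    "Supplier Evidence": 2,
    "Performance Verification": 3,
    "Governance and Monitoring": 1,
}

ceiling, focus = maturity_ceiling(scores)
print(ceiling, focus)
```

In this example the team is strong on specs but capped at Level 1 by governance, so monitoring cadence is the highest-leverage next step.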

To Advance From Your Current Baseline, Focus On These Targeted Transitions:

If your assessment shows Level 1 (Reactive): Start with scope discipline. Freeze a short “minimum spec” template—dimensions, style, closure method, and basic hazard statement—and apply it to the next RFQ. The objective is to stop re-deciding fundamentals every time there’s a shortage or an urgent order. You cannot improve what you haven’t documented. Identify your single highest-risk supplier relationship and request a basic evidence pack.

If your assessment shows Level 2 (Basic Controls): Add a comparability gate before any award. Require a single Incoterms baseline, a shared pack configuration, and a defined documentation set. This reduces disputes quickly because suppliers are responding to the same scope. Standardize your RFQ template to document the landed cost components you need visibility into.

If your assessment shows Level 3 (Managed): Standardize evidence packs for new suppliers and for any supplier moving into higher volume. Require sign-off on the spec, a test-method naming reference, and basic process controls. This shifts onboarding from “trust-based” to “proof-based” without adding heavy bureaucracy. Define your incoming inspection or testing protocol—even if sampling-based rather than comprehensive.

If your assessment shows Level 4 (Verified): Harden drift controls. Define revalidation triggers and an exception handling workflow that assigns root cause using evidence, not guesswork. This is where assurance becomes durable across seasons, lanes, and product changes. Document your periodic review cadence. Establish change notification requirements with suppliers. Begin tracking quality metrics that enable trend analysis.

If your assessment shows Level 5 (Strategic): Use the system to drive continuous improvement. Treat packaging performance and supply continuity as operational risk controls, not negotiation outcomes. The focus becomes repeatability: fewer emergencies, fewer disputes, and a clearer basis for cross-functional decisions. Document institutional knowledge so the system survives personnel changes.

A Practical Starting Sequence

A mature assurance program can be built stepwise. A realistic starting sequence is:

- Align on a single hazard statement per product family—what is being protected against

- Update the spec template to include tolerances and change notification rules

- Normalize RFQ scope so offers are aligned on delivery assumptions and configuration

- Require evidence packs for any supplier entering pilot or volume

- Run the three qualification gates, then set the monitoring cadence

This sequence does not require complex tooling. It requires shared language and repeatable controls.

The Shift Toward A Structured Assurance System Represents A Fundamental Change In Procurement Philosophy

The gap between commodity thinking and assurance thinking isn’t about spending more money. It’s about spending attention differently—front-loading the work of specification, comparability, evidence, qualification, and monitoring rather than back-loading it as crisis response.

This failure at the point of receipt, the ‘moment of truth’ on the warehouse floor, is expensive. ‘Cheap’ boxes cost more through hidden damage-rate economics that compound monthly: not just the direct cost of damaged goods, but the expedited replacements, the customer impact, the internal investigations, the supplier disputes, and the erosion of trust in procurement’s judgment. Each failure consumes time and credibility that could have been invested in building systems.

When a shipment arrives damaged, the temptation is to treat it as a supplier problem or a one-time mishap. But the more useful interpretation is operational: the event exposed which assumptions were never written down, never verified, and never governed.

Assurance doesn’t guarantee perfection. Boxes will still occasionally fail. Suppliers will still occasionally disappoint. But failures within a governed system generate data and improvement, while failures within a transactional approach generate blame and repetition.

The framework exists. The controls are proven. The maturity model gives you a starting point and a direction. The shift from commodity thinking to assurance is not about perfection—it’s about control. When procurement defines requirements clearly, aligns scope, requests proof, pilots before scale, and monitors for drift, corrugated sourcing stops being a recurring emergency and becomes a repeatable capability.

For deeper exploration of sourcing frameworks, specification discipline, and supplier verification practices, PaperIndex Academy offers comprehensive guides developed for procurement professionals navigating these exact challenges. And when you’re ready to build or expand your qualified supplier base, browsing corrugated box suppliers provides a starting point for discovery—the first step in a process that, done right, replaces commodity assumptions with documented assurance.

Disclaimer:

Any standards, examples, or references in this article are for educational purposes only; buyers and suppliers should validate requirements for their specific products and distribution conditions.

Our Editorial Process:

Our expert team uses AI tools to help organize and structure our initial drafts. Every piece is then extensively rewritten, fact-checked, and enriched with first-hand insights and experiences by expert humans on our Insights Team to ensure accuracy and clarity.

About the PaperIndex Insights Team:

The PaperIndex Insights Team is our dedicated engine for synthesizing complex topics into clear, helpful guides. While our content is thoroughly reviewed for clarity and accuracy, it is for informational purposes and should not replace professional advice.