📌 Key Takeaways

AI helps paper buyers spot missing details in quotes before those gaps turn into costly disputes after the order ships.

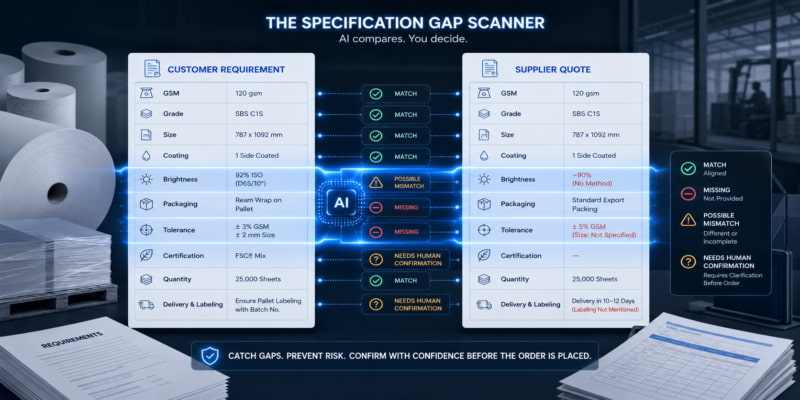

- Compare Fields, Not Feelings: Ask AI to check specific items—like GSM, size, coating, and packaging—side by side, instead of asking “does this quote look good?”

- Silence Isn’t Agreement: When a supplier’s offer skips a field like packaging or tolerance, that’s a gap to question—not a detail to assume is fine.

- Flag, Don’t Decide: AI can spot mismatches and missing info, but only the buyer or customer can approve whether a difference is acceptable.

- Vague Words Hide Risk: Phrases like “standard export packing” or “high brightness” without specifics should trigger a clarification question before order placement.

- Save the Paper Trail: Keep the comparison table, the questions you asked, and the answers you got—they protect you if a dispute comes up weeks later.

One structured review before you confirm the order prevents the rework and disputes that follow unreviewed gaps.

Paper buyers and procurement teams who manage multiple quotes under time pressure will find a repeatable pre-order checklist in the detailed guide that follows.

~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~

A paper quote may appear complete well before it is safe to place the order.

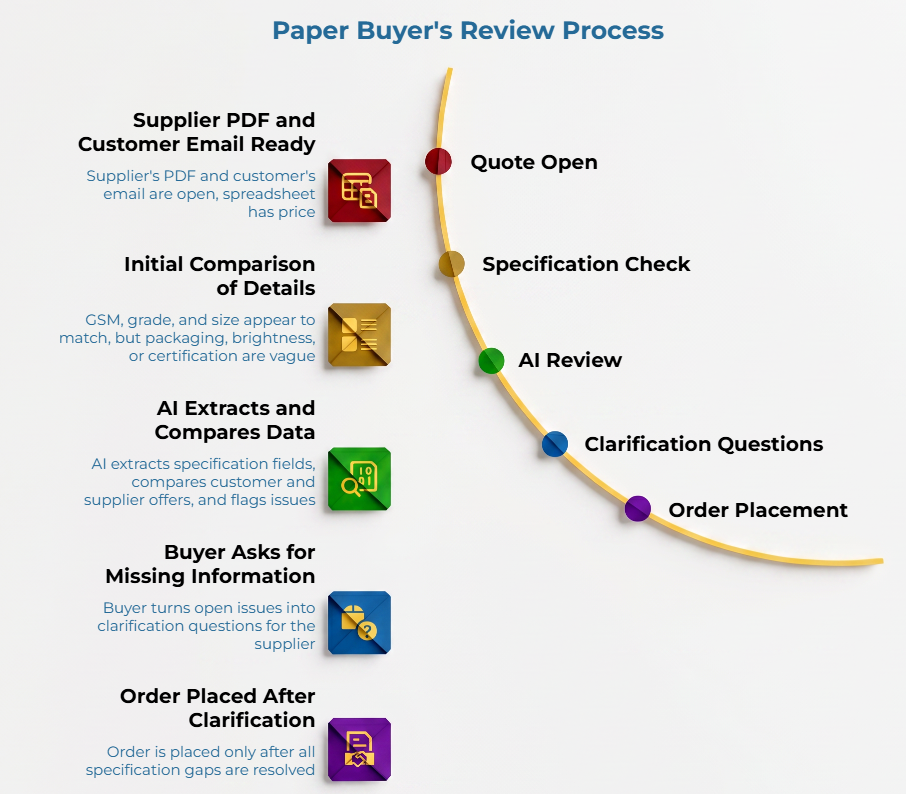

The supplier’s PDF is open, the customer’s email sits beside it, and the spreadsheet already has a price column filled in. GSM appears to match. Grade sounds familiar. Size looks close. Then one field is vague: packaging says “standard export packing,” brightness has no test context, or a certification is mentioned without a supporting document.

That is the moment paper buyers need a better review habit. You are not handing procurement judgment to AI. You are catching small specification gaps before they create back-and-forth, rework, non-conforming material, or fulfillment disputes.

AI is most useful as a comparison layer. It can extract specification fields, place customer requirements beside supplier offers, flag missing or conflicting details, and turn open issues into clarification questions. It should not be treated as proof of quality, compliance, certification status, or commercial acceptability.

Why Specification Gaps Create Trouble Before the Order Is Placed

A specification gap is the distance between what the customer needs and what the supplier has clearly offered.

That gap may be obvious, such as a different GSM. It may also be subtle, such as a supplier offering the right grade but failing to confirm roll width, core size, packing method, or allowable tolerance. More often, it is a chain of small assumptions that nobody isolates before confirmation.

Consider this scenario: a customer asks for 80 GSM coated paper in sheets, packed in ream-wrapped bundles. The supplier confirms “80 GSM coated paper” and gives the sheet size, but the packing line says only “standard export packing.” The offer may still be workable, but it is not fully matched.

Several habits let gaps like this slip through. A buyer scanning multiple offers under time pressure may treat a near match as an exact match, a pattern explored further in our guide on why paper RFQs are hard to compare manually. A supplier may omit fields they consider standard. And when two teams read “coated kraft,” they might picture entirely different products, because kraft paper grade terminology, coating descriptions, and brightness references are not used consistently across markets and mills.

These gaps surface after the order is placed—at the port, on the production floor, or in the customer’s inbox. At that point, the cost is not a quick email. It is a delay, a disputed shipment, or a damaged relationship.

This is where AI can help. Not by deciding the order. By forcing the missing field into view.

The Paper Specification Fields AI Should Check First

Start with the fields that decide whether both sides are describing the same material.

GSM refers to grammage, the mass of paper per unit area. ISO 536 covers the determination of grammage for paper and board. Size also needs context: ISO 216 applies to trimmed sizes of writing paper and certain classes of printed matter, not to every paper product. Brightness requires similar care. ISO 2470-1 covers ISO brightness measurement for white and near-white pulps, papers, and boards under indoor daylight conditions (C illuminant); D65 brightness under outdoor daylight conditions is a separate measurement covered by ISO 2470-2.
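Because grammage is mass per unit area, it also links quantities quoted in different units. The sketch below is a minimal worked example, not a standard method: the helper names `sheet_mass_g` and `sheets_to_tonnes` are hypothetical, the 500-sheet ream is an assumption, and the figures are nominal values that ignore tolerance and moisture.

```python
def sheet_mass_g(gsm: float, width_mm: float, length_mm: float) -> float:
    """Nominal mass of one sheet in grams: grammage (g/m2) times area (m2)."""
    # 1 m2 = 1,000,000 mm2, so divide the mm2 area by 1e6.
    return gsm * width_mm * length_mm / 1_000_000


def sheets_to_tonnes(sheets: int, gsm: float, width_mm: float, length_mm: float) -> float:
    """Convert a sheet count to nominal tonnes (1 tonne = 1,000,000 g)."""
    return sheets * sheet_mass_g(gsm, width_mm, length_mm) / 1_000_000


# Illustrative values: 80 GSM in a 700 x 1000 mm sheet.
per_sheet = sheet_mass_g(80, 700, 1000)   # grams per sheet
ream_kg = 500 * per_sheet / 1000          # assumed 500-sheet ream, in kg
print(per_sheet, ream_kg)
```

A conversion like this only makes two quantities comparable. It does not confirm which unit the supplier actually intends to invoice.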

| Field to check | What to compare | Example gap to flag | AI can help by | Who should confirm |
| --- | --- | --- | --- | --- |
| GSM | Required grammage vs quoted grammage and tolerance | Customer requests 70 GSM; supplier states 70 GSM but omits tolerance | Extracting and comparing values; flagging missing tolerance or unit discrepancy | Buyer or customer |
| Grade | Customer grade name vs supplier description | “Virgin kraft” requested, “kraft” quoted without specifying composition | Highlighting terminology differences and vague or non-matching descriptions | Buyer and supplier |
| Size | Sheet, roll, width, length, or diameter | Customer specifies 700 × 1000 mm; supplier offers 70 × 100 cm without confirming trim direction | Identifying missing dimensions; flagging grain direction ambiguity | Supplier or operations team |
| Coating | Coated, uncoated, gloss, matte, barrier, or finish; type, side, weight | Customer asks for C1S with 12 g/m² coating; supplier confirms C1S but omits weight | Separating exact matches from vague matches; highlighting missing coating details | Customer or technical user |
| Brightness | Target value and measurement context | Brightness listed without method or specification basis | Flagging missing test context and absent test method references | Quality or technical team |
| Packaging | Ream, pallet, roll wrap, core, label, or export packing | “Standard packing” only | Asking for concrete packing details; identifying missing packaging fields | Buyer and supplier |
| Tolerance | Accepted variation vs supplier range | No tolerance stated in either document | Marking “needs confirmation”; flagging absent tolerance statements | Contract owner or customer |
| Certification | Claim, certificate, scope, and document | FSC mentioned without certificate evidence | Identifying unsupported mentions; detecting mentions without supporting documents | Buyer and supplier |
| Quantity and unit | Tonnes, rolls, sheets, reams, or pallets | Quantity stated in different units | Normalizing comparison fields | Commercial team |
| Delivery and labeling | Delivery terms, labels, markings, and timing | Customer requires euro pallets with lot-specific labels; supplier offer is silent | Generating clarification questions; flagging missing delivery or labeling fields | Operations team |

This table keeps the AI focused on discrete fields instead of subjective opinions. Without that structure, AI may produce a confident summary while missing the one blank cell that matters.
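One way to keep that blank cell visible is to hold the checklist as a fixed list and report every field a document leaves empty. This is a minimal sketch: the field keys and the `blank_cells` helper are hypothetical names chosen for illustration, and the sample offer is invented.

```python
# Field keys mirror the ten rows of the table; the spellings are an assumption.
SPEC_FIELDS = [
    "gsm", "grade", "size", "coating", "brightness", "packaging",
    "tolerance", "certification", "quantity_and_unit", "delivery_and_labeling",
]


def blank_cells(document: dict) -> list:
    """Return the checklist fields that are absent or empty in one document."""
    return [f for f in SPEC_FIELDS if not str(document.get(f) or "").strip()]


# Invented supplier offer: packaging is present as a key but contains only a space.
supplier_offer = {"gsm": "70", "grade": "kraft", "size": "70 x 100 cm", "packaging": " "}
print(blank_cells(supplier_offer))
```

Running the same function on the customer requirement shows where the request itself is incomplete.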

Packaging requirements deserve specific attention. They are often treated as secondary—even though mismatches in wrapping or palletization cause fulfillment problems just as easily as a GSM mismatch.

Tolerances and standards vary by grade, market, and contractual terms. Rather than assume a default, flag any gap for clarification.

For quote comparison beyond specifications, see our guide on how to standardize paper supplier quotes before using AI to compare them.

How AI Can Compare Customer Requirements with Supplier Offers

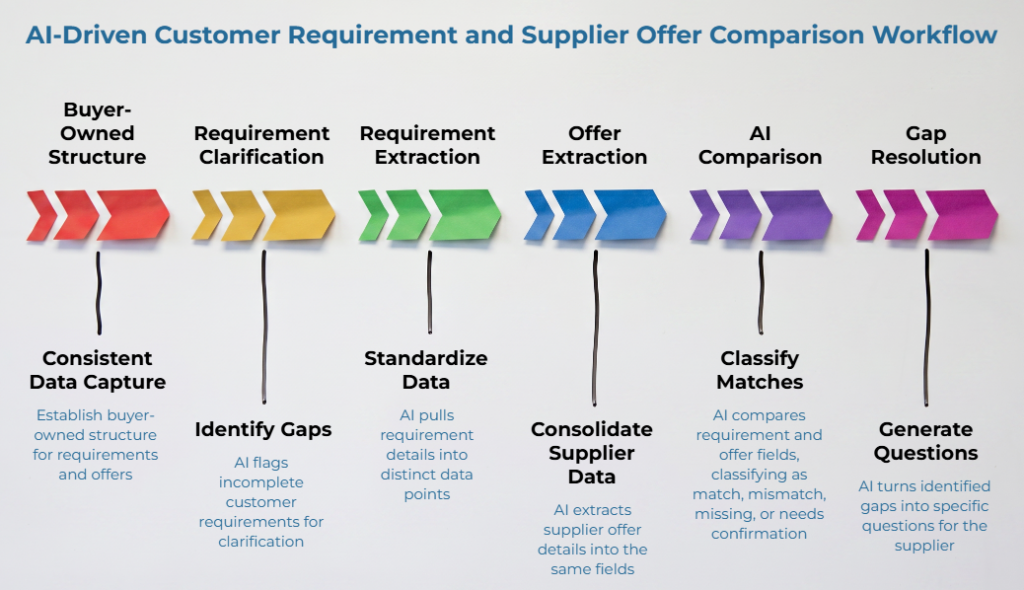

The best workflow begins with buyer-owned structure. The comparison does not require a specialized platform—it requires consistency across fields, even when the customer’s requirement arrives as an email and the supplier’s offer comes as a PDF.

An important starting point: customer requirements are not always complete. If the requirement says “80 GSM coated paper” and nothing else, there are gaps to clarify before comparing anything. AI should flag incomplete requirements with the same rigor applied to incomplete supplier offers.

Capture the customer requirement in one consistent format. Pull GSM, grade, size, coating, brightness, packaging, tolerances, certification requirements, quantity, delivery terms, and labeling into distinct data points. If the request uses shorthand or any field is missing or vague, mark it for clarification.

Extract the supplier offer into the same fields. Supplier details may arrive in a PDF, an email, a spreadsheet, or a scanned document. AI can collect those details into one table, but scanned or poorly formatted documents may create extraction errors. Treat the extraction as a draft, not a verified record.

Ask AI to classify each field as match, possible mismatch, missing, or needs human confirmation. The comparison should be literal, not interpretive. A field should not be marked as a match unless the supplier clearly provided the information and the values are identical or within a confirmed acceptable range.

Turn the gaps into questions. If packaging is missing, ask the supplier to confirm it. If brightness has no test context, ask which method or specification basis applies. If certification is mentioned, ask for the relevant documents.

As an illustrative example: a customer specifies 70 GSM uncoated woodfree, ISO brightness 92, with moisture-protective wrapping on Euro pallets. The supplier confirms 70 GSM woodfree and brightness 92, but does not state the test method (for example, ISO 2470-1, often written as R457) and says nothing about packaging. AI flags both gaps for clarification before order placement.
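The literal comparison described above can be sketched in a few lines. This is an illustration under stated assumptions, not a production tool: the `Status` enum, the `classify` function, and the three-field subset are hypothetical, and exact string equality is a deliberately strict stand-in for "the supplier clearly provided the same information."

```python
from enum import Enum


class Status(Enum):
    MATCH = "Match"
    POSSIBLE_MISMATCH = "Possible Mismatch"
    MISSING = "Missing"
    NEEDS_CONFIRMATION = "Needs Human Confirmation"


def classify(required, offered):
    """Literal comparison: a blank is never treated as a match."""
    if not required:
        return Status.NEEDS_CONFIRMATION  # the requirement itself is incomplete
    if not offered:
        return Status.MISSING
    if required.strip().lower() == offered.strip().lower():
        return Status.MATCH
    return Status.POSSIBLE_MISMATCH


# A subset of fields from the illustrative example above.
requirement = {
    "gsm": "70",
    "brightness": "92 ISO brightness",
    "packaging": "moisture-protective wrapping on Euro pallets",
}
offer = {"gsm": "70", "brightness": "92", "packaging": None}

review = {field: classify(requirement[field], offer.get(field)) for field in requirement}

# Every field that is not a clear match becomes a clarification question.
open_items = [
    f"Please confirm {field}: customer requires '{requirement[field]}', "
    f"offer states '{offer.get(field) or 'nothing'}'."
    for field, status in review.items()
    if status is not Status.MATCH
]
print(open_items)
```

The strictness is intentional. A comparison that over-flags produces an extra question, while one that under-flags hides a gap.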

A good AI review does not end with “approved.” It ends with a short list of unresolved items.

Standardizing these fields early ensures the comparison remains focused on objective data points rather than interpretation.

What AI Should Flag Before Order Confirmation

A useful AI review must be specific. Ask AI to flag:

- Missing fields such as packaging, tolerance, certification documents, roll details, or labeling.

- Conflicting values, such as one GSM in the quote and another in a follow-up email.

- Ambiguous units, where one document uses tonnes and another uses rolls.

- Near matches, where the offer is close but not identical to the requirement.

- Vague supplier language such as “standard,” “premium,” “high brightness,” or “export quality” without supporting details.

- Certification mentions that come without documentation.

- Tolerance differences that need customer or contract review.
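Vague supplier language is the easiest of these flags to illustrate with a simple text check. The sketch below assumes a hypothetical `vague_phrases` helper and uses only the four example terms named above. A real list would be longer, and a match is a prompt to ask a question, not evidence of a problem.

```python
import re

# The four example terms from this section; extend the list as needed.
VAGUE_TERMS = ["standard", "premium", "high brightness", "export quality"]
VAGUE_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, VAGUE_TERMS)) + r")\b",
    re.IGNORECASE,
)


def vague_phrases(text: str) -> list:
    """Return the vague terms found in a supplier statement, lowercased."""
    return [m.group(1).lower() for m in VAGUE_PATTERN.finditer(text)]


print(vague_phrases("Standard export packing, premium grade"))
print(vague_phrases("Ream-wrapped, 500 sheets per ream, on 1000 x 1200 mm pallets"))
```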

Here is an illustrative near-match scenario. A customer requests 80 GSM and the supplier offers 78 GSM without stating tolerance. AI should flag the gap. The buyer—not AI—decides whether the difference falls within an acceptable range. AI should not assess whether a near match is commercially acceptable. That judgment belongs to the buyer and the customer.
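The arithmetic behind this scenario is simple, and writing it down shows where the tool stops and the buyer takes over. In this sketch the `gsm_check` helper is hypothetical, and the tolerance values in the usage lines are invented for illustration. No default tolerance is assumed when none is stated.

```python
from typing import Optional


def gsm_check(required: float, offered: float, tolerance_pct: Optional[float]) -> dict:
    """Report the deviation; mark a match only if identical or within a confirmed tolerance."""
    deviation_pct = (offered - required) * 100 / required
    if offered == required:
        status, note = "Match", "values identical"
    elif tolerance_pct is None:
        status, note = "Possible Mismatch", "no tolerance stated; buyer must confirm"
    elif abs(deviation_pct) <= tolerance_pct:
        status, note = "Match", "within confirmed tolerance"
    else:
        status, note = "Possible Mismatch", "outside confirmed tolerance"
    return {"deviation_pct": deviation_pct, "status": status, "note": note}


# The scenario above: 80 GSM requested, 78 GSM offered, tolerance not stated.
print(gsm_check(80, 78, None))
# Hypothetical case: the customer has confirmed a tolerance of plus or minus 3 percent.
print(gsm_check(80, 78, 3.0))
```

The function reports a deviation of -2.5 percent in both calls. Only the confirmed tolerance, supplied by a person, changes the status.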

Where Human Review Still Matters

AI can compare statements. It cannot decide commercial acceptance.

The buyer must still confirm whether a difference is acceptable. A supplier must still confirm final offer details. A customer or technical user may still need to approve a tolerance, finish, packing method, or grade substitution. If certification is involved, AI can identify a claim, but it cannot verify it.

The Forest Stewardship Council describes FSC chain-of-custody certification as relating to materials tracked through the supply chain, from sourcing to distribution. PEFC states that chain-of-custody certification is available to companies that manufacture, process, trade, or sell forest-based products. In practice, certification review may depend on certificate scope, claim type, product relationship, invoice wording, and customer requirements. When grade descriptions are too vague to evaluate, the same principle applies: ask the supplier for specifics before accepting the offer.

| AI can flag | Human review must decide |
| --- | --- |
| A missing field | Whether the order can proceed without it |
| A conflicting value | Which document controls and whether the difference falls within contractual tolerance |
| A vague supplier phrase | What the supplier actually means |
| A certification mention | Whether documentation supports the claim and the certificate is valid, current, and applicable |
| A tolerance difference | Whether the variation is acceptable based on grade, end use, and contract terms |
| Packaging or labeling details absent | Whether the supplier’s standard packaging meets the customer’s fulfillment requirements |

This is not a limitation to work around. It is the safety mechanism.

A Simple Pre-Order AI Review Workflow

Use AI as a second-pass review.

Manual review catches many issues, but people get interrupted. A buyer may be comparing three supplier offers, answering a customer call, and checking freight terms in the same half hour. When properly prompted and constrained, AI can generally apply the same field-by-field criteria to every review. A repeatable process keeps the review consistent across team members and orders, and the whole point is to surface what is missing, not just compare what is present.

Use this workflow:

- Build a buyer-owned requirement sheet using the fields from the specification gap review table, similar to the approach described in our guide on spec sheets that work for packaging paper converters.

- If the customer’s own requirements are incomplete, note the gaps. They need clarification too.

- Extract supplier details into the same fields.

- Ask AI to classify each field as match, possible mismatch, missing, or needs human confirmation.

- Require AI to cite the source text or document section for each extracted value.

- Convert every gap into a supplier or customer question.

- Save the comparison table and final confirmations with the order file. Doing so reduces the back-and-forth that follows quote approval when gaps are caught late, and it is useful documentation if a dispute arises weeks after the order was placed.
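The requirement that every extracted value cite its source can be enforced with a small record type. This is a sketch only: `ExtractedValue` and `uncited` are hypothetical names, and the sample values are invented.

```python
from dataclasses import dataclass


@dataclass
class ExtractedValue:
    field: str
    value: str
    source_text: str = ""  # the quoted passage or document section the value came from


def uncited(values: list) -> list:
    """Return the fields whose extracted value has no source citation."""
    return [v.field for v in values if not v.source_text.strip()]


# Invented extraction results for illustration.
extracted = [
    ExtractedValue("gsm", "70", "Offer PDF, line 3: '70 gsm woodfree'"),
    ExtractedValue("packaging", "ream-wrapped"),  # no source given
]
print(uncited(extracted))
```

A value without a citation is treated as unverified extraction and goes back for a manual check against the original document.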

A practical prompt could read:

“Compare the customer requirement and supplier offer field by field. Use only the information provided. Classify each field as Match, Possible Mismatch, Missing, or Needs Human Confirmation. Do not infer missing values. For each issue, write one clarification question and identify who should confirm it.”
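Teams that run this review often may prefer to assemble the prompt from a template so the wording never drifts between orders. The sketch below only builds a text string. The `build_prompt` helper and the section labels are assumptions, and it makes no call to any AI service, since the tool used should be whichever one your organization has approved.

```python
PROMPT_TEMPLATE = (
    "Compare the customer requirement and supplier offer field by field. "
    "Use only the information provided. "
    "Classify each field as Match, Possible Mismatch, Missing, or Needs Human Confirmation. "
    "Do not infer missing values. "
    "For each issue, write one clarification question and identify who should confirm it.\n\n"
    "Fields to check: {fields}\n\n"
    "CUSTOMER REQUIREMENT:\n{requirement}\n\n"
    "SUPPLIER OFFER:\n{offer}\n"
)


def build_prompt(fields: list, requirement_text: str, offer_text: str) -> str:
    """Fill the fixed instruction text with the fields and the two documents."""
    return PROMPT_TEMPLATE.format(
        fields=", ".join(fields),
        requirement=requirement_text.strip(),
        offer=offer_text.strip(),
    )


prompt = build_prompt(
    ["GSM", "packaging"],
    "80 GSM coated paper, ream-wrapped bundles",
    "80 GSM coated paper, standard export packing",
)
print(prompt)
```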

Use approved AI tools only. Do not upload confidential customer, supplier, pricing, or contract information into unapproved systems.

For teams handling RFQs that are already hard to compare manually, adding this specification review step before order confirmation creates a practical second-pass safety net.

Common Mistakes When Using AI for Specification Checks

Even a well-structured AI review can produce unreliable results if the inputs or expectations are off.

The first mistake is asking for a general opinion. “Does this quote match?” is too broad. “Compare these 10 fields and flag missing values” gives AI a structure it can follow and produces output your team can act on.

The second mistake is treating blanks as approvals. If the supplier’s offer does not mention packaging, that is not confirmation—it is an open question. If tolerance is absent, it is absent. AI should never convert silence into acceptance.

The third mistake is ignoring units or test context. “80 brightness” without a test method is not the same as “80 ISO brightness per ISO 2470-1.” A prompt like “Does this offer look good?” gives AI too much room to generalize past these details.

The fourth mistake is accepting certification claims without chain-of-custody (CoC) validation. “FSC certified” in an email is not the same as a valid certificate with a license number. Buyers should know how to run a quick registry check for FSC/PEFC certificates before treating any mention as confirmation. AI flags the mention. The buyer requests the documents.
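The split between flagging and verifying can be made explicit in code. This sketch assumes a hypothetical `certification_flags` helper and uses a crude filename match to guess whether a supporting document was attached. It detects mentions only and does not verify anything.

```python
import re

CERT_MENTION = re.compile(r"\b(FSC|PEFC)\b", re.IGNORECASE)


def certification_flags(offer_text: str, attached_documents: list) -> list:
    """Flag each scheme that is mentioned but has no attached document naming it."""
    schemes = sorted({m.group(1).upper() for m in CERT_MENTION.finditer(offer_text)})
    flags = []
    for scheme in schemes:
        # Assumption: a supporting document carries the scheme name in its filename.
        has_doc = any(scheme.lower() in name.lower() for name in attached_documents)
        if not has_doc:
            flags.append(
                f"{scheme} mentioned without a supporting document: "
                "request the certificate and check the scheme registry."
            )
    return flags


print(certification_flags("Paper is FSC certified", []))
print(certification_flags("FSC and PEFC available", ["fsc_certificate.pdf"]))
```

Even when a document is attached, the result means only "document present." Validity, scope, and applicability remain a human check.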

The fifth mistake is skipping the review trail. Save the first comparison, the clarification questions, the supplier answers, and the final accepted version. When a dispute appears weeks later, memory is a weak control. A documented comparison gives the buyer a clear record.
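A review trail can be as simple as one saved record per order. The sketch below is an assumption about shape, not a required format: the `review_record` helper, the field names, and the sample order reference are all hypothetical.

```python
import json
from datetime import datetime, timezone


def review_record(order_ref, comparison, questions, answers, accepted_by):
    """Bundle the comparison, questions, answers, and sign-off into one record."""
    return {
        "order_ref": order_ref,
        "saved_at": datetime.now(timezone.utc).isoformat(),
        "comparison": comparison,
        "questions": questions,
        "supplier_answers": answers,
        "accepted_by": accepted_by,
    }


# Invented example values for illustration.
record = review_record(
    order_ref="EXAMPLE-001",
    comparison={"packaging": "Missing"},
    questions=["Please confirm the packing method."],
    answers=["Ream-wrapped and palletized."],
    accepted_by="buyer",
)
serialized = json.dumps(record, indent=2)
print(serialized)
```

Saving the serialized text alongside the order file keeps the first comparison and the final accepted version in one place.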

For price-focused quote evaluation, our guide on why price is only one part of AI-assisted quote comparison covers the strategy.

Frequently Asked Questions

Can AI verify that a supplier’s offer meets the customer’s requirement?

AI can compare the information provided in the requirement and offer. It can flag matches, gaps, conflicts, and unclear fields. Final acceptance still belongs with the buyer, supplier, customer, and any relevant quality or technical reviewer. AI structures the review—it does not make the acceptance decision.

Which paper specifications should buyers check first?

Start with GSM, grade, size, coating, brightness, packaging, tolerances, and supplier-provided certifications. These are the fields most likely to carry assumptions that only surface after the order is placed.

Can AI verify FSC, PEFC, or other certification claims?

AI can flag whether certification is mentioned and whether supporting documents appear to be included. It should not be treated as the verifier. Certificate scope, claim wording, transaction documents, and customer requirements need human review. Certification status should be verified through supplier-provided documentation and the relevant scheme’s chain-of-custody guidance—our guide on FSC vs PEFC claims in plain English explains what these labels actually prove and where they fall short.

Is this workflow useful for smaller buying teams?

A structured comparison workflow can be useful wherever buyers need a consistent second pass before order placement. Smaller teams, where one person handles multiple sourcing tasks under time pressure, often benefit most from a repeatable checklist that does not depend on memory. The checklist works as a systematic second look at each field, which makes an overlooked gap less likely at final confirmation.

What should a buyer do when the offer is close but not exact?

Treat it as a possible mismatch. Ask the supplier or customer to confirm whether the difference is acceptable before placing the order. The friction you prevent now is the dispute you do not have to resolve later.

Use AI to Slow Down the Right Part of the Order Process

The goal is not to make procurement slower. The goal is to prevent avoidable friction before the order becomes harder to correct.

A paper quote may look complete because the familiar fields are present. The real risk often sits in the quiet spaces: an undefined tolerance, a vague packing phrase, a certification mention without evidence, or a near match that nobody has approved.

AI helps when it turns those quiet spaces into visible questions. It gives buyers a repeatable way to review what was requested, offered, missing, and still unconfirmed.

Before confirming the next order, use a structured specification checklist. Compare the fields. Flag the gaps. Confirm the open questions. That small pause can protect the order, the customer relationship, and the buyer’s credibility.

Disclaimer:

This content is educational and should not replace supplier documentation, customer approval, quality review, certification verification, or contract-specific advice. AI-assisted specification review should support, not replace, human commercial judgment. Buyers should verify supplier documentation, certifications, and tolerances through appropriate channels before confirming any order.

Our Editorial Process:

Our expert team uses AI tools to help organize and structure our initial drafts. Every piece is then extensively rewritten, fact-checked, and enriched with first-hand insights and experiences by expert humans on our Insights Team to ensure accuracy and clarity.

About the PaperIndex Insights Team:

The PaperIndex Insights Team is our dedicated engine for synthesizing complex topics into clear, helpful guides. While our content is thoroughly reviewed for clarity and accuracy, it is for informational purposes and should not replace professional advice.