📌 Key Takeaways

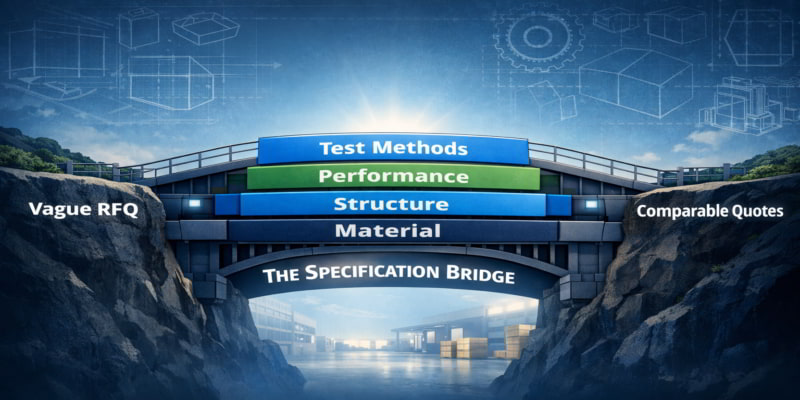

Supplier quotes look comparable but often aren’t—teams must define shared folding carton specifications before comparing prices.

- Define Before You Compare: A shared blueprint with clear fields, tolerances, and test methods stops suppliers from guessing what you want.

- Four Layers Lock It Down: Material, structure, performance, and test methods form the complete picture—missing any layer invites hidden mismatches.

- One Specification Works Until It Doesn’t: A single house specification stays valid only while all your products face similar filling, shipping, and storage conditions.

- Exceptions Need Rules: When products genuinely differ, document what changes, why it changes, and who approves—informal tweaks create chaos over time.

- Validate Before Quotes Arrive: Confirm your specifications reflect real line speeds, warehouse humidity, and pallet stacking before asking suppliers to respond.

Better definition beats louder negotiation.

This guide is for procurement managers, packaging engineers, and brand operations teams managing multi-product portfolios. It offers a practical alignment framework and sets up the detailed specification guidance that follows.

~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~ ~

Executive Summary

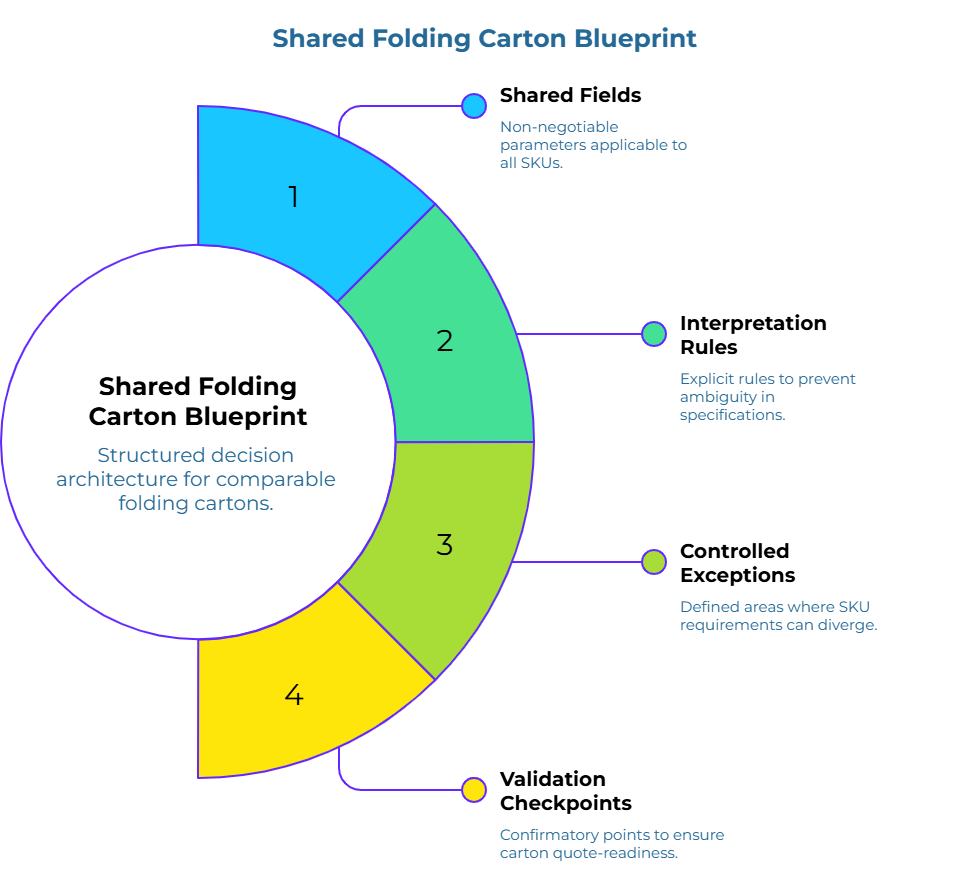

Teams think they are comparing supplier quotes. In reality, they are often comparing different interpretations of the same vague folding carton RFQ. The result is unreliable rankings, late-stage failures, and internal friction that could have been avoided. A shared folding carton blueprint solves this by defining minimum fields, interpretation rules, controlled exceptions, and validation checkpoints before supplier comparison begins. This guide shows how to build that blueprint.

Why Folding Carton Quotes Look Comparable When They Are Not

A packaging refresh is underway. Requests for quotations have gone out to several suppliers, some of them found through online marketplaces. Quotes come back with similar-sounding descriptions: folding cartons, SBS board, standard dimensions. The prices differ, so the temptation is to rank on cost and move forward.

The spreadsheet is open. The dimensions look close. The folding carton names sound familiar. This is where weak requirement logic does its damage.

The quotes look comparable because the RFQ itself was vague. Suppliers filled in the gaps using their own assumptions about board caliper, coating type, tolerance bands, and test methods. Each quote reflects a different interpretation of the same placeholder description. The misconception that similar-sounding folding cartons create comparable quotes is widespread, but it rarely survives contact with real-world operations.

The problem surfaces later. One supplier delivers folding cartons that perform well on the filling line but fail in humid distribution environments. Another delivers folding cartons that meet burst-strength requirements but jam during automated erection. A third meets the written specification but interprets “standard dimensions” differently enough to cause retailer rejections.

Manufacturing variation does occur, but these late-stage failures rarely trace back to bad suppliers. Most trace back to a requirement or RFQ architecture that never existed.

This pattern repeats across SKU expansions, material transitions, and supplier consolidation efforts. Teams believe they are making apples-to-apples comparisons when the underlying definition was never explicit. The guesswork gap between what the buyer meant and what the supplier assumed remains invisible until something breaks.

A stronger or cheaper folding carton cannot solve a mismatch that started as a definition problem. Neither can louder negotiation. The fix begins earlier, with better definition. Relying on vendor data sheets often makes this drift worse: when definitions originate outside the buyer’s own requirement logic, misaligned assumptions are built in before formal comparison begins.

What a Shared Folding Carton Blueprint Actually Needs to Define

A shared folding carton blueprint is not a product specification sheet. It is a structured decision architecture that defines the variables, tolerances, evidence requirements, and validation rules needed to determine whether folding cartons are comparable and fit for use across a SKU portfolio.

Think of it as a master drawing in construction. Every contractor, subcontractor, and inspector works from the same document. The same principle applies across packaging formats—whether you are sourcing folding cartons or applying a strategic framework for resilient corrugated box sourcing. Interpretation disputes become resolvable because the drawing defines what “compliant” means. Without that drawing, each party operates from their own mental model, and disagreements become matters of opinion rather than matters of fact.

In folding carton sourcing, the blueprint must define at least four things.

First, it must define the shared fields that apply across all SKUs in the portfolio. These are the non-negotiable parameters that every folding carton must satisfy regardless of product type.

Second, it must define the interpretation rules that prevent ambiguity. A field like “board grade” means nothing unless the measurement method, tolerance band, and acceptance threshold are explicit—a problem explored in depth in understanding why relying on supplier specifications undermines folding carton quality.

Third, it must define where controlled exceptions are permitted. Not every SKU will share identical requirements, and the blueprint must show where divergence is allowed and how it is governed.

Fourth, it must define the validation checkpoints that confirm a folding carton is quote-ready. A requirement that cannot be validated is not a requirement.

That definition becomes practical only when the team can follow it in sequence. A plain-language house-specification model usually works best:

- Define the shared base fields. Lock the common requirement fields that truly travel across the SKU family. Material definition, dimensions, structural construction, performance expectations, and test-method logic belong here.

- Set the tolerance logic. Decide which variables must stay tight and which can vary within named limits. A board grade without tolerance logic is still open to interpretation.

- Name the evidence pack. Decide what proof must accompany a response. Method-named test results, material declarations, samples, or supporting documents should be required where they matter.

- Create the exception path. Decide in advance which fields are allowed to vary when a SKU no longer fits the shared base model, and who has authority to approve those changes.

- Validate before comparison. Confirm that the shared definition still reflects fit-for-use, line behavior, and distribution realities before any supplier comparison begins.
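The five steps above can be sketched as a simple data structure. This is a minimal illustration, assuming the house specification is kept as plain Python objects; the names `HouseSpec`, `FieldSpec`, and `incomplete_steps` are hypothetical and not part of any standard or tool. The function only reports which steps are still open, in the order the team would close them.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FieldSpec:
    """One shared base field: a target value and a symmetric +/- tolerance (hypothetical schema)."""
    target: Optional[float] = None
    tolerance: Optional[float] = None  # None means the tolerance logic is not yet set
    unit: str = ""


@dataclass
class HouseSpec:
    """Hypothetical container mirroring the five house-specification steps."""
    base_fields: dict[str, FieldSpec] = field(default_factory=dict)  # step 1
    evidence_pack: list[str] = field(default_factory=list)           # step 3
    exception_fields: Optional[set[str]] = None  # step 4: None = not decided; empty set = nothing may vary
    exception_approver: str = ""                 # step 4: who approves variations
    validated: bool = False                      # step 5: checked against line and distribution reality


def incomplete_steps(spec: HouseSpec) -> list[str]:
    """Return the steps that are still open, in sequence order."""
    open_steps = []
    if not spec.base_fields:
        open_steps.append("shared base fields")
    # Step 2: every base field needs both a target and a tolerance band.
    if not spec.base_fields or any(
        f.target is None or f.tolerance is None for f in spec.base_fields.values()
    ):
        open_steps.append("tolerance logic")
    if not spec.evidence_pack:
        open_steps.append("evidence pack")
    if spec.exception_fields is None or not spec.exception_approver:
        open_steps.append("exception path")
    if not spec.validated:
        open_steps.append("validation")
    return open_steps


draft = HouseSpec(base_fields={
    "caliper_pt": FieldSpec(18.0, 0.5, "pt"),
    "length_mm": FieldSpec(100.0, None, "mm"),  # tolerance not yet decided
})
print(incomplete_steps(draft))
# ['tolerance logic', 'evidence pack', 'exception path', 'validation']
```

The design choice worth noting is that an undecided exception path (`None`) is treated differently from a deliberate decision that nothing may vary (an empty set), which keeps "not yet discussed" from passing as "approved".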

When all four elements are explicit and the sequence is followed, the blueprint becomes a shared reference point. Procurement can compare quotes on a like-for-like basis. Engineering can assess fit-for-use confidence. Brand operations can manage complexity without losing consistency. Interpretive variance narrows to what the blueprint deliberately allows.

The Core Field Map: Substrate, Structure, and Performance Metrics

Translating the blueprint concept into operational practice requires organizing requirement fields into logical groups. A four-layer model provides a practical starting point: material definition, structural construction, performance requirements, and test-method specification.

Material definition covers the substrate itself—the packaging paper or board that forms your folding carton’s foundation. This layer includes board grade, caliper range, basis weight, coating type, and fiber composition. Each field needs a target value and an acceptable tolerance band. A requirement like “SBS board” is incomplete. A requirement like “SBS board, 18 pt caliper ±0.5 pt, C1S coating” begins to close interpretation gaps. For guidance on setting these tolerance bands correctly, see how board grade tolerances prevent specification drift across suppliers.
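The difference between “SBS board” and a fuller material definition can be made concrete as a completeness check. This is a minimal sketch with hypothetical field keys; it assumes numeric fields (caliper, basis weight) need a `(target, tolerance)` pair while categorical fields (grade, coating, fiber) only need a stated value.

```python
from __future__ import annotations

# Material-layer fields named in the paragraph above; the keys themselves are hypothetical.
MATERIAL_FIELDS = ("board_grade", "caliper_pt", "basis_weight", "coating", "fiber_composition")

# Assumption: numeric fields need a (target, tolerance) pair; categorical fields need a stated value.
NUMERIC_FIELDS = {"caliper_pt", "basis_weight"}


def material_gaps(material: dict) -> list[str]:
    """List the material fields a supplier would still have to guess."""
    gaps = []
    for name in MATERIAL_FIELDS:
        value = material.get(name)
        if value is None:
            gaps.append(f"{name}: missing")
        elif name in NUMERIC_FIELDS and not (isinstance(value, tuple) and len(value) == 2):
            gaps.append(f"{name}: needs (target, tolerance)")
    return gaps


vague = {"board_grade": "SBS"}
better = {"board_grade": "SBS", "caliper_pt": (18.0, 0.5), "coating": "C1S"}

print(material_gaps(vague))
# ['caliper_pt: missing', 'basis_weight: missing', 'coating: missing', 'fiber_composition: missing']
print(material_gaps(better))
# ['basis_weight: missing', 'fiber_composition: missing']
```

The second result mirrors the text: naming caliper with a tolerance and the coating begins to close the gaps, but basis weight and fiber composition are still left to supplier interpretation.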

Structural construction covers how the folding carton is built. This layer includes dimensions (length, width, depth), closure style, glue-flap configuration, and any die-cut features. Tolerances matter here as well. A dimension stated as “100 mm” invites interpretation. A dimension stated as “100 mm ±1.0 mm” provides a pass/fail boundary that both buyer and supplier can reference.
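A stated tolerance turns each dimension into a pass/fail test both sides can run. The sketch below assumes symmetric bands; the 100 mm ±1.0 mm length comes from the text, while the width, depth, and measured sample values are hypothetical.

```python
from __future__ import annotations


def within_tolerance(measured: float, target: float, tol: float) -> bool:
    """Pass/fail against a symmetric band: target - tol <= measured <= target + tol."""
    return target - tol <= measured <= target + tol


def check_dimensions(measured: dict[str, float], spec: dict[str, tuple[float, float]]) -> dict[str, bool]:
    """Return a pass/fail flag per specified dimension; a missing measurement counts as a fail."""
    return {
        dim: dim in measured and within_tolerance(measured[dim], target, tol)
        for dim, (target, tol) in spec.items()
    }


# Length follows the text; width, depth, and the sample measurements are illustrative only.
spec = {"length_mm": (100.0, 1.0), "width_mm": (60.0, 1.0), "depth_mm": (25.0, 0.5)}
sample = {"length_mm": 100.8, "width_mm": 61.2, "depth_mm": 25.0}

print(check_dimensions(sample, spec))
# {'length_mm': True, 'width_mm': False, 'depth_mm': True}
```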

Performance requirements cover what the folding carton must do in real-world conditions. This layer includes burst strength, compression resistance, moisture resistance, and any barrier properties relevant to the product being packaged. Performance fields should reflect actual use scenarios: line speed, stacking height, distribution humidity, retail shelf conditions. A folding carton that passes a lab test but fails in the supply chain is not fit for use.

Test-method specification closes the final interpretation gap. Two suppliers can report the same burst-strength value and mean different things if they used different test methods. The same principle, defining and enforcing specifications through named test methods, applies across packaging categories. The blueprint must name the test method (for burst strength, for example, TAPPI T 807 or ISO 2759), the sample conditioning protocol, and the acceptance threshold. Without this layer, the other three layers remain vulnerable to silent misalignment.
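One way to enforce this rule is to carry the method and conditioning protocol alongside every reported value and refuse to compare values whose context differs. This is a minimal sketch: the `ReportedResult` schema is hypothetical, ISO 187 and TAPPI T 402 are used only as example conditioning standards, and all numbers are illustrative. It also assumes the acceptance threshold is a minimum.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ReportedResult:
    """A test value plus the context needed to interpret it (hypothetical schema)."""
    value: float
    unit: str
    method: str        # named test method, e.g. "ISO 2759" or "TAPPI T 807"
    conditioning: str  # named conditioning protocol


def comparable(a: ReportedResult, b: ReportedResult) -> bool:
    """Values can be ranked against each other only if method, conditioning, and unit all match."""
    return (a.method, a.conditioning, a.unit) == (b.method, b.conditioning, b.unit)


def meets_minimum(result: ReportedResult, threshold: ReportedResult) -> bool:
    """Pass/fail against a minimum threshold; mismatched context is an error, not a pass."""
    if not comparable(result, threshold):
        raise ValueError(
            f"{result.method}/{result.conditioning} result cannot be judged "
            f"against a {threshold.method}/{threshold.conditioning} threshold"
        )
    return result.value >= threshold.value


# Hypothetical threshold and supplier results; the numbers are illustrative only.
threshold = ReportedResult(1000.0, "kPa", "ISO 2759", "ISO 187")
supplier_a = ReportedResult(1050.0, "kPa", "ISO 2759", "ISO 187")
supplier_b = ReportedResult(1050.0, "kPa", "TAPPI T 807", "TAPPI T 402")

print(meets_minimum(supplier_a, threshold))  # True
print(comparable(supplier_a, supplier_b))    # False: same number, different methods
```

Raising an error rather than returning `False` is a deliberate choice here: a result measured under a different method is not a failure, it is an unanswered question that needs clarification.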

This layered approach works best when paired with a checklist mindset. For a deeper structure that organizes these parameters into a working checklist, see the baseline folding carton parameter checklist. The checklist helps move the conversation from vague descriptions to complete field groups. It also keeps attention on the fields that matter most for multi-SKU standardization: basis weight, test methods, and tolerances, which are common sources of invisible drift.

When One House Specification Still Works

Not every portfolio needs a complex exception matrix. When SKUs share operational similarity, a single house specification remains defensible and efficient.

Operational similarity means the SKUs impose comparable demands on the folding carton. They run on the same filling line at similar speeds. They face similar distribution conditions. They sit in similar retail environments. Their weight ranges and product sensitivities overlap enough that one folding carton design can serve them all without overengineering or underperforming.

In this scenario, the blueprint can specify a single material grade, a single structural design, and a single performance band. Quotes from paper manufacturers and converters become straightforward to compare because every quote responds to the same requirement set. Internal alignment is simpler because there is one specification to review, not a matrix of variants.

The decision rule is practical. If the team can demonstrate that all SKUs in the portfolio fall within the same operational envelope, one house specification works. If even one SKU falls outside that envelope, the single-specification approach begins to carry risk.
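The decision rule can be made explicit as a range check. In this sketch the envelope dimensions (line speed, stack height, distribution humidity, fill weight) follow the conditions named above, but every limit and SKU value is a hypothetical placeholder; a real envelope would come from your own line and distribution data.

```python
from __future__ import annotations

# Hypothetical envelope: (min, max) for each condition the house specification was built for.
ENVELOPE = {
    "line_speed_cpm": (0, 120),       # cartons per minute on the filling line
    "stack_height_layers": (1, 6),    # pallet layers in storage and transit
    "distribution_rh_pct": (30, 65),  # relative humidity in distribution
    "fill_weight_g": (50, 500),
}


def outside_envelope(skus: dict[str, dict[str, float]], envelope=ENVELOPE) -> dict[str, list[str]]:
    """Map each SKU that breaks the envelope to the conditions it breaks."""
    breaches = {}
    for sku, conditions in skus.items():
        # A missing condition counts as a breach: NaN fails every comparison.
        bad = [name for name, (lo, hi) in envelope.items()
               if not lo <= conditions.get(name, float("nan")) <= hi]
        if bad:
            breaches[sku] = bad
    return breaches


skus = {
    "DRY-01": {"line_speed_cpm": 90, "stack_height_layers": 5, "distribution_rh_pct": 50, "fill_weight_g": 250},
    "FRZ-01": {"line_speed_cpm": 80, "stack_height_layers": 5, "distribution_rh_pct": 90, "fill_weight_g": 400},
}
breaches = outside_envelope(skus)
print(breaches)  # {'FRZ-01': ['distribution_rh_pct']}
print("one house specification" if not breaches else "controlled exceptions needed")
```

Treating a missing measurement as a breach follows the rule in the text: a SKU only qualifies for the single specification when the team can demonstrate it sits inside the envelope.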

The risk is not theoretical. Legacy folding carton specifications are often stretched further than they can safely go, and the failure tends to appear late: after tooling is committed, after suppliers are selected, after the packaging refresh is supposed to be complete. The trouble starts when sameness becomes a habit instead of a test.

Where your organization maintains cross-SKU material standards, reference them to keep the house specification consistent without compromising functional requirements.

When Controlled Exceptions Become Necessary

Controlled exceptions are not a failure of standardization. They are evidence of governance discipline.

That point matters because many teams treat exceptions like a crack in the system. The opposite is usually true. A system without a clear exception path often hides variation until it becomes expensive.

When SKUs diverge in strength requirements, barrier needs, format constraints, or line-performance demands, the single house specification becomes a risky placeholder. One folding carton description is being asked to cover situations it was never designed for.

Consider a hypothetical portfolio expansion. The original SKUs are dry goods in moderate-weight folding cartons. The new SKUs are frozen products requiring moisture barriers and higher compression resistance. Forcing both into a single specification either overengineers the dry-goods folding cartons (adding cost without benefit) or underengineers the frozen-product folding cartons (creating field failures). Neither outcome is defensible. Transitions like this benefit from a methodology-first approach to compliance verification before exception categories are defined.

The blueprint handles this by defining exception categories. An exception category is a documented divergence from the base specification with its own field values, tolerances, and validation rules. The base specification might cover 80% of the portfolio. Exception Category A covers the frozen products. Exception Category B covers an oversized format that requires different structural construction.

Each exception category must specify what changes, why it changes, and who owns the decision. A controlled exception should answer three questions: What remains common across the folding carton family? Which fields are allowed to vary for this SKU? Who owns the approval and maintenance of that variation?
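The three questions can be encoded directly in an exception record. This is a minimal sketch under stated assumptions: the base specification values, the set of fields allowed to vary, and the Category A overrides (including a 20 pt caliper) are all hypothetical. `common_fields` answers what remains common, `overrides` answers which fields vary, and `owner` answers who approves.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical base specification and the fields the blueprint allows exceptions to change.
BASE_SPEC = {"board_grade": "SBS", "caliper_pt": 18.0, "coating": "C1S", "barrier": "none"}
VARIABLE_FIELDS = {"caliper_pt", "coating", "barrier"}


@dataclass
class ExceptionCategory:
    name: str
    overrides: dict   # which fields vary, and to what
    rationale: str    # why they vary
    owner: str        # who approves and maintains the variation
    skus: list = field(default_factory=list)

    def common_fields(self) -> set:
        """Fields this category still shares with the base specification."""
        return set(BASE_SPEC) - set(self.overrides)


def validate(exc: ExceptionCategory) -> list[str]:
    """Return governance problems; an empty list means the exception is controlled."""
    problems = []
    illegal = sorted(set(exc.overrides) - VARIABLE_FIELDS)
    if illegal:
        problems.append(f"fields not allowed to vary: {illegal}")
    if not exc.rationale.strip():
        problems.append("missing rationale")
    if not exc.owner.strip():
        problems.append("missing owner")
    return problems


def resolve(exc: ExceptionCategory) -> dict:
    """Effective spec for SKUs in the category: base values plus approved overrides."""
    problems = validate(exc)
    if problems:
        raise ValueError(f"{exc.name}: {problems}")
    return {**BASE_SPEC, **exc.overrides}


category_a = ExceptionCategory(
    name="Category A (frozen)",
    overrides={"barrier": "moisture barrier", "caliper_pt": 20.0},
    rationale="frozen distribution needs moisture resistance and higher compression",
    owner="packaging engineering",
)
print(resolve(category_a))
# {'board_grade': 'SBS', 'caliper_pt': 20.0, 'coating': 'C1S', 'barrier': 'moisture barrier'}

informal = ExceptionCategory("ad hoc", {"board_grade": "CUK"}, rationale="", owner="")
print(validate(informal))
# ["fields not allowed to vary: ['board_grade']", 'missing rationale', 'missing owner']
```

The informal request shows the failure mode described next: a grade change with no rationale and no owner is rejected instead of quietly becoming a new variant.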

Without this structure, exceptions accumulate informally. One stakeholder requests a heavier board. Another requests a different coating. Over time, the portfolio drifts into unmanaged complexity, and supplier comparison becomes unreliable again.

The blueprint transforms exceptions from ad-hoc adjustments into governed decisions. That transformation is what separates controlled complexity from chaotic complexity.

How to Normalize Interpretation Across Procurement, Engineering, and Brand Operations

A blueprint is only as strong as the number of teams reading it the same way.

A blueprint that lives in one department’s files is not a shared blueprint. It becomes shared only when each cross-functional stakeholder sees their priorities reflected in it.

Procurement needs quote comparability. The blueprint serves this need by defining fields precisely enough that supplier responses—whether from established partners or newly discovered vendors who list their companies on specialized marketplaces—can be evaluated on a like-for-like basis. When every quote responds to the same tolerances, test methods, and evidence requirements, ranking becomes defensible. Procurement no longer has to guess whether a lower price reflects a better offer or a different interpretation.

Engineering needs fit-for-use confidence. The blueprint serves this need by grounding performance requirements in real operating conditions—not just lab benchmarks. Engineering can assess whether a proposed folding carton will survive the filling line, the distribution network, and the retail environment. The four-layer field structure gives engineering a place to specify what matters technically without drowning procurement in unstructured detail.

Brand operations need manageable complexity and packaging consistency. The blueprint serves this need by limiting exception categories and documenting where divergence is permitted. Brand operations can see at a glance which SKUs share a common folding carton and which require controlled exceptions. This visibility prevents the slow drift toward unmanaged variants that undermines brand presentation and complicates inventory management.

Shared language helps here. Terms such as house specification, controlled exceptions, evidence pack, fit-for-use, and quote-ready folding carton definition are more than vocabulary choices. They are alignment tools. They reduce the number of meetings where people use the same words while defending different assumptions.

Normalization happens when all three functions review the blueprint before it becomes the basis for supplier outreach. A 45-minute alignment session can surface disagreements early, when they are cheap to resolve. Without that session, disagreements surface later—when quotes are already in hand and timeline pressure makes compromise feel mandatory.

The main tension in most organizations is between simplification for sourcing efficiency and technical precision for real-world fit-for-use. For a structured approach to resolving this tension, see how teams can align procurement and engineering priorities through shared checklists. The blueprint does not eliminate that tension. It makes the tension visible and governable. This is not just documentation hygiene. It is review hygiene.

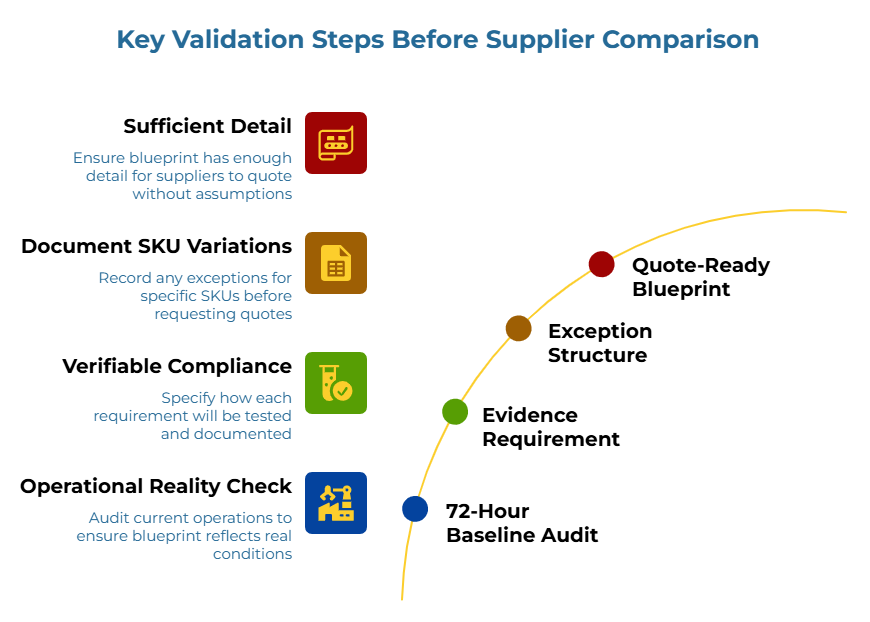

What to Validate Before Supplier Comparison Starts

Validation should begin before commercial comparison, not after it.

The first validation checkpoint asks whether the blueprint reflects real operating conditions. Requirements written in a conference room sometimes miss what actually happens on the line or in the warehouse. A structured 72-hour baseline audit can help teams ground specifications in operational reality before supplier outreach begins. A brief review with line operators, warehouse staff, or distribution partners can surface mismatches early. Does the specified compression resistance match actual pallet stacking? Does the moisture-barrier requirement reflect actual humidity exposure? Does the dimensional tolerance match actual filling-line clearances?

The second validation checkpoint asks whether each requirement can be evidenced. A requirement that cannot be tested or documented is not enforceable. The blueprint should specify not just what is required but how compliance will be demonstrated. Method-named test protocols, conditioning standards, and acceptance thresholds turn requirements into verifiable claims. This is the difference between a broad claim and a quote-ready folding carton definition.
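The second checkpoint can be run as a simple audit over the requirement list. This minimal sketch assumes each requirement records a named test method, a conditioning standard, and an acceptance threshold; the `Requirement` schema and the example values are hypothetical. The check only confirms that each part is named; it cannot judge whether a threshold is sensible.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Requirement:
    """A blueprint requirement and the evidence needed to enforce it (hypothetical schema)."""
    name: str
    test_method: Optional[str] = None   # method-named test protocol
    conditioning: Optional[str] = None  # conditioning standard applied before testing
    threshold: Optional[str] = None     # acceptance threshold, written as a pass/fail rule


EVIDENCE_PARTS = ("test_method", "conditioning", "threshold")


def unenforceable(requirements: list[Requirement]) -> dict[str, list[str]]:
    """Map each requirement that cannot yet be evidenced to the parts it is missing."""
    gaps = {}
    for req in requirements:
        missing = [part for part in EVIDENCE_PARTS if not getattr(req, part)]
        if missing:
            gaps[req.name] = missing
    return gaps


requirements = [
    Requirement("burst strength", "ISO 2759", "ISO 187", ">= 1000 kPa"),  # illustrative values
    Requirement("moisture resistance"),                                    # a broad claim only
]
print(unenforceable(requirements))
# {'moisture resistance': ['test_method', 'conditioning', 'threshold']}
```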

The third validation checkpoint asks whether the exception structure is complete. If the team knows that certain SKUs will require different parameters, those exceptions should be documented before quotes are requested. Discovering exceptions after quotes arrive forces rework and delays.

The fourth validation checkpoint asks whether the blueprint is quote-ready. A quote-ready blueprint contains enough detail that suppliers can respond without making assumptions. If a supplier must guess, the resulting quote is not truly comparable to quotes from suppliers who guessed differently. Spec-driven RFQs, which pair technical specifications with commercial terms, show how this works in practice.

These checkpoints are not exhaustive. They are starting points that help teams catch the most common gaps before those gaps become expensive.

How to Use This Blueprint During Quote Review, Packaging Refreshes, and SKU Expansion

A strong blueprint should earn reuse. The test of a good blueprint is whether it becomes the natural reference point when decisions arise.

During quote review, the blueprint serves as the comparability filter. When an RFQ carries clear specifications, the quotes that arrive can be checked against the defined fields with confidence. Does this quote address the specified caliper range? Does it reference the correct test method? Does it include evidence for the required performance parameters? With a complete specification blueprint, gaps become immediately visible: quotes that leave fields unanswered, or answer them differently than specified, can be flagged for clarification before ranking begins. This prevents the common situation where quotes look comparable on the surface but reflect different assumptions underneath.
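The three review questions can be turned into a filter that runs before any price ranking. This is a minimal sketch: the blueprint fields (an 18 pt ±0.5 pt caliper range, a named burst method, a required evidence set) and both supplier quotes are hypothetical. Any returned flag means the quote goes back for clarification rather than into the ranking.

```python
from __future__ import annotations

# Hypothetical blueprint fields each quote must answer.
SPEC = {
    "caliper_pt": (17.5, 18.5),  # 18 pt +/- 0.5 pt
    "burst_method": "ISO 2759",
    "evidence": {"burst test report", "material declaration"},
}


def review_quote(quote: dict) -> list[str]:
    """Return clarification flags; an empty list means the quote is comparable on these fields."""
    flags = []
    caliper = quote.get("caliper_pt")
    lo, hi = SPEC["caliper_pt"]
    if caliper is None:
        flags.append("caliper not stated")
    elif not lo <= caliper <= hi:
        flags.append(f"caliper {caliper} pt outside {lo}-{hi} pt")
    method = quote.get("burst_method")
    if method != SPEC["burst_method"]:
        flags.append(f"burst method {method!r} differs from {SPEC['burst_method']!r}")
    missing = SPEC["evidence"] - set(quote.get("evidence", ()))
    if missing:
        flags.append(f"missing evidence: {sorted(missing)}")
    return flags


quotes = {
    "Supplier A": {"caliper_pt": 18.0, "burst_method": "ISO 2759",
                   "evidence": ["burst test report", "material declaration"]},
    "Supplier B": {"caliper_pt": 16.0, "burst_method": "TAPPI T 807",
                   "evidence": ["material declaration"]},
}
ready = [name for name, quote in quotes.items() if not review_quote(quote)]
print(ready)  # ['Supplier A']
```

Only quotes that pass this filter are ranked on price; the rest are not necessarily worse offers, just answers to a different question.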

During packaging refreshes, the blueprint serves as the change-control document. When a refresh is proposed, the team can assess which fields are affected. Does the refresh change material grade? Does it introduce a new exception category? Does it require updated validation protocols? The blueprint provides a structured way to evaluate impact instead of relying on memory or scattered documentation. Refresh projects often inherit legacy descriptions that feel settled only because nobody wants to reopen them. A blueprint gives the team a cleaner way to challenge stale assumptions without starting from zero.

During SKU expansion, the blueprint serves as the fit-assessment tool. When a new SKU is proposed, the team can check whether it falls within the existing operational envelope or requires a controlled exception. If it fits, the existing specification applies. If it does not fit, the exception process defines how to document the divergence. Either way, the decision is governed rather than improvised. That decision should happen before the market is asked to respond—early enough to prevent drift, early enough to preserve comparability.

Reuse is what transforms a one-time document into a durable decision architecture. The more often the blueprint is referenced, the more value it provides—and the more discipline it instills across the organization.

Ready to apply this framework? Browse folding carton suppliers on PaperIndex to begin supplier discovery with a clear specification in hand.

Or explore folding carton listings to see what is available across the global marketplace.

For more educational resources, visit the PaperIndex Academy.

Disclaimer:

This article is for educational purposes only. All specifications, tolerances, and sourcing decisions should be verified independently. Consult qualified professionals for your specific requirements.

Our Editorial Process:

Our expert team uses AI tools to help organize and structure our initial drafts. Every piece is then extensively rewritten, fact-checked, and enriched with first-hand insights and experiences by expert humans on our Insights Team to ensure accuracy and clarity.

About the PaperIndex Insights Team:

The PaperIndex Insights Team is our dedicated engine for synthesizing complex topics into clear, helpful guides. While our content is thoroughly reviewed for clarity and accuracy, it is for informational purposes and should not replace professional advice.